Karpathy Says Agents Are A Decade Out. Good — Your Data Isn’t Ready Either.

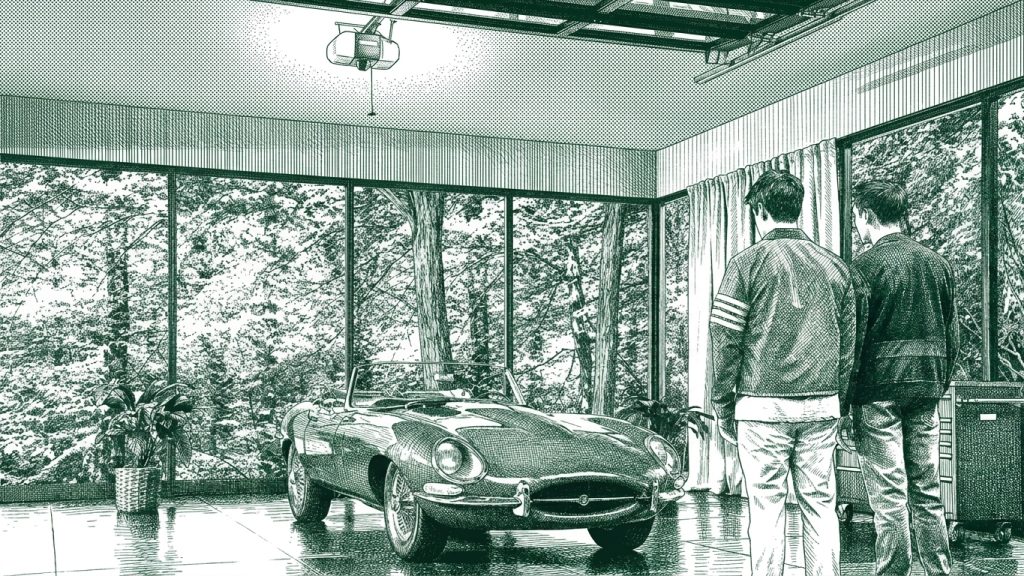

The architects keep telling us the architecture isn't there yet. They're right. They also just bought every operator a window. The buyers panicking about AGI in two years are the buyers who got handed Cameron Frye's father's Ferrari and are afraid to take it out of the garage.

THE NUMBER: 10. That is how many years Andrej Karpathy, over two and a half hours on Dwarkesh Patel’s podcast, said truly capable AI agents will take to arrive. It is roughly the same number of years your average Fortune 1000 will need to learn how to drive the Porsche they already bought. “You can never replace this. You can never. Never. Ever. Replace it.” That’s Cameron Frye in 1986, looking at his father’s 1961 Ferrari 250 GT California, up on blocks with the mileage running backward. The car, this week, is the frontier model. The blocks are your data architecture. The mileage running backward is the productivity paradox. And Karpathy just told you, on the record, that you have a decade.

Andrej Karpathy — OpenAI co-founder, ex-Tesla AI lead, the guy who literally wrote Software 2.0 — sat down with Dwarkesh Patel over the weekend and said agents are a decade out. Not next year. Not next quarter. Ten years. The bottlenecks he listed: not intelligent enough, not multimodal enough, can’t do reliable computer use, no continual learning. Each one a multi-year research program. The interview cleared 100K views and Aligned News’ editorial brief tonight calls it the most honest AI conversation happening right now. Karpathy is the third architect to say it this week. Demis Hassabis has said it five times by Aligned’s count — AGI doesn’t show up until 2030. David Silver, the man who built AlphaGo, raised $1.1 billion at $5.1 billion last week for Ineffable Intelligence on the explicit thesis that the LLM corpus has a floor — he didn’t have to say it; he wrote a check that said it.

Same Sunday Karpathy’s interview was trending: OpenAI announced GPT-6 has completed pre-training at Stargate and is now in alignment. The product division was renamed the “AGI Deployment Division.” OpenAI is calling GPT-5.5 a new class of intelligence. Anthropic crossed $1 trillion in private valuation this week, passing OpenAI for the first time, and is reportedly raising at $900B. The Five Eyes intelligence services published a joint advisory telling enterprises to use agents only for low-risk, non-sensitive tasks. The Mythos cybersecurity model — “too dangerous to release” — got breached by a URL. And a developer on GitHub named aattaran shipped a tool called deepclaude that swaps Claude Code’s autonomous agent loop onto DeepSeek V4 Pro for 17x less money, no quality loss.

Two opposite headlines, same story underneath. The capability is real and accelerating. The reliability is real and lagging. The price is collapsing. The buyer is still on the bicycle. Karpathy didn’t just bear-call the consensus. He handed every CIO a permission slip. Stop trying to hire agents. Start supervising them. The decade he just gave you is for getting your house in order — top-down adoption, expertise inside the building, a corporate brain that knows what your company actually sounds like, and proprietary data architected for an agent to read. None of those is technology. All of them are work. And the operator who does the work in 2026 is the operator who’s running the Ferrari at 200 miles an hour in 2030, while the operator who waited for AGI is the one explaining to the board why the odometer ran backward.

🧠 Three Architects In A Row, And Nobody On Wall Street Is Listening

Karpathy is the news. The pattern is the issue. In nine days, three of the most credentialed AI architects on earth have publicly contradicted the consensus deployment narrative — using different words, from different vantage points, against the same wall of capital.

Hassabis (Google DeepMind, Nobel in Chemistry, the man who folded 200 million proteins) keeps saying AGI shows up in 2030. He has said it so many times that Aligned News has stopped writing it as news. Silver (DeepMind, AlphaGo, AlphaZero, AlphaFold) raised $1.1B at $5.1B for a brand-new pre-product lab whose explicit thesis is that the LLM training corpus has a floor — and the investors writing his check (Sequoia, Lightspeed, Nvidia, Google, DST, Index) are the same firms cheerleading roughly $1.8 trillion of consensus 2026-2028 hyperscaler capex on the opposite thesis. Now Karpathy lays out, in his most operational interview to date, exactly why the architecture is going to take a decade to do what the marketing department says it does this quarter — agents lack intelligence, multimodality, computer use, and continual learning. Each one is a research program. None of them ships in two quarters.

Three architects, same testimony, against $1.8 trillion of committed spend. That is no longer a contrarian take. That is an emerging consensus among the people who built the thing. And it’s directly contradicting the consensus among the people pricing it. Same week the architects spoke, Anthropic crossed $1T on a safety pitch, the Five Eyes told enterprises to keep agents on a short leash, and Mythos — Anthropic’s “too dangerous to release” cyber model that found 271 zero-days in Firefox alone — got compromised by a URL exploit. Bronze-Age access control on a 2026 frontier model. The reliability gap Karpathy is describing isn’t a research curiosity. It’s a procurement risk that already showed up in production.

We’ve seen this movie before. The labs paying themselves to be gods are the same labs whose own engineers are telling Dwarkesh, on tape, that the architecture isn’t ready. Don’t get attached to anything you can’t walk out on in 30 seconds flat. De Niro said that across a diner table in 1995. Karpathy said the same thing this weekend with different words. The smart money is hedged. The retail money is buying the new product line.

Why this matters: If you’re a CIO sitting on a 2026 vendor map, the ten-year timeline isn’t bad news. It’s clarity. It means the people who actually build these systems don’t expect the architecture to ship a one-shot replacement for your engineering org in this fiscal year — or the next four. That gives you a window your CFO can plan against. The right conversation in the next board meeting isn’t which AGI vendor do we standardize on. It’s what do we need to do over the next 36 months so we’re ready to use what these systems can actually do today, plus what they’ll be able to do in 2027, plus what they won’t be able to do until 2032? Plan for the decade Karpathy gave you. Don’t price the marketing.

🦞 You Bought A Porsche. You’re Driving It Like A Bicycle.

Bob Solow’s old line about computers — you can see the computer age everywhere except in the productivity statistics — applies word-for-word to AI in 2026. Programmers ship more code. Customer service tickets close faster. Internal Google reporting says three quarters of new commits are AI-written. None of this has moved output per hour in the macro data. The capability is real. The output isn’t following.

Here’s why. Walk into a hundred mid-cap CEOs’ offices and ask four questions. Have you architected your data so an agent can read it? Blank stare. What’s your token budget for the year? Disdainful look — like you asked them their cholesterol number. Who owns your corporate brain — the one that tells the agent how this company sounds, what it stands for, what it would never do? Long pause. How many of your senior people can drive Claude or GPT past first-page autocomplete? A number you can count on one hand, in a 500-person company.

That’s the gap. The frontier labs ship Mythos, which finds 271 zero-days in Firefox. They ship GPT-5.5, which OpenAI is calling a new class of intelligence. They ship Claude Code, which writes 4% of all GitHub commits. They ship Codex, which Every just profiled going to work end-to-end. The capability is German engineering. The pricing is increasingly Yugo. And the buyer is using it to write three-paragraph emails and summarize meeting transcripts. “There are simply too many notes, Mozart. Just cut a few and it will be perfect.” Most enterprise users aren’t even hearing the music being played, let alone learning to direct it.

Four things have to be true for the productivity stats to start matching the capability ramp, and almost no company has all four:

- Top-down adoption. Not a pilot in IT. The CEO uses it. The board uses it. The org chart is rewritten around what the new tool does. (Most companies: pilots in IT, ignored by the C-suite.)

- Expertise. Senior people who can drive the car. Not “took a workshop.” Spent six months actually shipping work with it. (Most companies: zero internal experts. The juniors know more than the executives.)

- A corporate brain. A documented, machine-readable representation of what your company is, how it speaks, what it values, what it refuses to do — the institutional voice in a form an agent can actually use. (Most companies: a brand book PDF nobody opened in three years.)

- Clean, architected, proprietary data. Not the SAP install. Your data — your customers, your contracts, your products, your history — structured for an agent to consume. (Most companies: 47 spreadsheets, 12 SaaS silos, three Salesforce instances and a shared drive nobody trusts.)

Without those four, the Porsche never leaves the driveway. With them, the same Porsche becomes the operating layer of the company. The Karpathy decade is the time the operator has to build the four things. And the operator who treats it as a window — who hires the foreman, gets the data house in order, builds the corporate brain, and gets the CEO to actually use the tool herself — is the operator who’s running circles around the competitor that waited for the architecture to do the work for them.

The action item: Take the next 90 days and put in writing, with names attached, who in your company owns each of the four things. Adoption owner. Expertise owner. Corporate brain owner. Data architecture owner. If any of those four lines is blank, you’re not behind on AI. You’re not even on the field. The actual good news of the week: the engineering is now Yugo-priced, the timeline is a decade, and there are no points awarded for being early to a race that hasn’t started.

⚡ Build Everything To Be Swapped — deepclaude Is The Lesson

Here’s the most quietly important thing that landed this week. A developer named aattaran put a tool on GitHub called deepclaude. It does one thing. It keeps Claude Code’s autonomous agent loop — the file editing, the bash execution, the subagent spawning, the multi-step coding — and swaps the model underneath from Anthropic’s Claude ($15 per million output tokens, $200/month with usage caps) to DeepSeek V4 Pro ($0.87 per million output tokens, 96.4% on LiveCodeBench). Same UX. 17x cheaper. No quality loss for most coding work.
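The 17x figure is simple arithmetic on the two per-token prices quoted above, and it is worth running against your own volume. A minimal sketch follows; the monthly token count is a made-up workload for illustration, not a number from either vendor.

```python
# Prices quoted in the paragraph above, in USD per million output tokens.
CLAUDE_PRICE = 15.00
DEEPSEEK_PRICE = 0.87


def cost_usd(output_tokens: int, price_per_million: float) -> float:
    """Cost of generating `output_tokens` at a given per-million price."""
    return output_tokens / 1_000_000 * price_per_million


# Price ratio between the two models (about 17.2).
ratio = CLAUDE_PRICE / DEEPSEEK_PRICE

# Hypothetical workload: 500 million output tokens a month (an assumption).
monthly_tokens = 500_000_000
claude_cost = cost_usd(monthly_tokens, CLAUDE_PRICE)
deepseek_cost = cost_usd(monthly_tokens, DEEPSEEK_PRICE)

print(f"price ratio: {ratio:.1f}x")
print(f"monthly cost: ${claude_cost:,.0f} vs ${deepseek_cost:,.0f}")
```

This only compares output-token list prices. Input tokens, caching, subscription caps and quality differences on your own workload are left out and would change the real number.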

Aligned News flagged it on the homepage tonight in plain language: the agent platform is becoming the constant. The model underneath is becoming a variable. That is the most important architectural sentence written about enterprise AI this quarter, and it has nothing to do with AGI. It is the operational version of every distribution-is-the-moat argument we’ve been making for two weeks (see Apr 27 and Apr 30) — except now it’s happening on the buyer side. The frontier labs spent three years convincing CIOs that the model was the asset. A weekend hack on GitHub just demonstrated that the agent shell, the harness, the tool loop — whatever you want to call the system the model lives inside — is what compounds. The model is a part you can swap.

The implication for any operator building an AI stack right now is not subtle. Build every layer to be swappable. The model. The vector store. The orchestration. The memory layer. The observability stack. The deployment surface. Assume — because it’s already true, today — that something cheaper, faster, or better will land on every one of those layers within twelve months. The companies that get killed in 2027 are the ones that hard-coded a single vendor’s stack into their operating model in 2026. The companies that win are the ones that built abstraction layers above each component and treated every brand-name dependency as a tenant, not a landlord. Pick your Paulie still applies. But this week’s lesson is that you should pick as many Paulies as your architecture lets you swap between — and you should be working toward a stack you can run on hardware you control, with weights you license, on data you own. Sovereignty over the stack is the ultimate flexibility. That is the moat — not the model.
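One way to picture "tenant, not landlord" in code is a thin interface that the agent depends on, with each vendor living behind an adapter. The sketch below is illustrative only: `ChatModel`, `EchoModel` and `Agent` are hypothetical names, and the stand-in model just echoes its input where a real adapter would call a vendor SDK.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """The only contract the agent knows about. Any vendor adapter must fit it."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for a vendor adapter (hypothetical); it echoes instead of calling an API."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Agent:
    """The harness: the loop stays constant, the model underneath is a variable."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def swap_model(self, model: ChatModel) -> None:
        self.model = model

    def run(self, task: str) -> str:
        return self.model.complete(f"Plan and execute: {task}")


agent = Agent(EchoModel("vendor-a"))
first = agent.run("summarize contract")

# Swapping the model is one line; nothing else in the harness changes.
agent.swap_model(EchoModel("vendor-b"))
second = agent.run("summarize contract")

print(first)
print(second)
```

The same pattern applies to the vector store, the memory layer and the rest: the harness talks to an interface you own, and each brand name sits behind it.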

Here’s what to do: This quarter, walk every senior engineer through your AI stack and circle every component that has exactly one provider. For each circle, ask one question — what would it cost us to switch this in 90 days? If the answer is we can’t, that component is not a tool. It’s a counterparty risk on your balance sheet. Make a 12-month plan to add a fallback for every red circle. The 17x cost reduction deepclaude just proved is what your CFO is going to demand from every layer of the stack within four quarters. Be the company that ships the answer. Don’t be the company that gets handed a contract renewal and discovers you have no leverage.
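The circling exercise can start as a dictionary before it becomes a slide. A minimal sketch, assuming a hand-maintained inventory: the layer names come from the list earlier in this section, and every provider name is a placeholder.

```python
# Hypothetical inventory: each layer maps to the providers you could
# actually run in production today. All provider names are placeholders.
stack = {
    "model": ["vendor-a", "vendor-b"],
    "vector store": ["vendor-c"],
    "orchestration": ["in-house"],
    "memory layer": ["vendor-d", "in-house"],
    "observability": ["vendor-e"],
    "deployment surface": ["vendor-f", "vendor-g"],
}


def red_circles(inventory: dict[str, list[str]]) -> list[str]:
    """Return the layers that have exactly one provider, sorted by name."""
    return sorted(
        layer for layer, providers in inventory.items() if len(providers) == 1
    )


circled = red_circles(stack)
for layer in circled:
    only = stack[layer][0]
    print(f"RED CIRCLE: {layer} (only {only}). What would a 90-day switch cost?")
```

Each printed line is a question for a senior engineer, not an answer. The script finds the single points of dependency; the 90-day switching cost still has to be estimated by people.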

🔒 The Real Key Is Understanding Your Own Data

There’s a Fortune piece this morning headlined “AI models are choking on junk data.” The piece is making a research point — physical AI and world models need experience data the internet doesn’t have, OpenAI killed Sora because the model couldn’t predict physics, the data labelers (Scale, Surge, Mercor) are producing volume, not quality. Same root cause Karpathy named when he listed his bottlenecks. The labs have a data problem.

The buyer has a worse one. The training data problem is solvable by writing checks to Mercor or by Silver building a new architecture in a basement somewhere. The proprietary data problem inside your company is solvable only by you. Nobody is coming. McKinsey isn’t going to clean your customer table. Salesforce isn’t going to give you a corporate brain. The agent vendor has no incentive to architect your data for portability — the messier your data is, the deeper the lock-in. Your data is your moat, and most companies’ data is a swamp.

Look at what every successful enterprise AI deployment in the last six months has in common. Customers Bank embedded OpenAI engineers inside the bank to build commercial-lending automation — the AI didn’t work until the bank’s data did. Salesforce went up 83% on Jason Lemkin’s bill because his agents read Salesforce’s structured database fields exhaustively, around the clock — they couldn’t read his Notion wikis, so Notion got cancelled. Every drug-discovery agent platform that landed in the last two weeks (Aligned counted five) is just an LLM bolted to a proprietary, structured, expensive-to-build chemistry database. The model is the commodity. The data is the asset. The agents reward whichever vendor’s data is structured for them and quietly abandon whoever’s isn’t. That’s already true. It’s about to get more true every quarter.

So if you’re going to take Karpathy’s decade as a gift, this is the work that fills it. Stack-rank your data assets by how readable they are by an agent today. Treat the most valuable data sets in the company as engineering products with named owners, schemas, version histories, and access controls — not as line items on a SaaS contract. Build the corporate brain in writing, in markdown, in a place a Claude or a GPT or a DeepSeek can read it tomorrow morning without an integration project. The companies that do this work in 2026 are the companies whose Porsche is already on the road in 2027. The companies that don’t are the ones explaining to the board, in 2030, why the architecture finally arrived and they still couldn’t use it.
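The stack-rank can begin as a crude checklist score. The sketch below assumes the four attributes named above (named owner, schema, version history, access controls) each count for one point; the asset names, owners and equal weighting are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataAsset:
    name: str
    owner: Optional[str]  # None means nobody is accountable for it
    has_schema: bool
    has_version_history: bool
    has_access_controls: bool

    def readiness(self) -> int:
        """Count how many of the four checklist items are in place (0 to 4)."""
        return sum(
            [
                self.owner is not None,
                self.has_schema,
                self.has_version_history,
                self.has_access_controls,
            ]
        )


# Hypothetical assets for illustration only.
assets = [
    DataAsset("contracts shared drive", None, False, False, True),
    DataAsset("customer table", "head of data", True, True, True),
    DataAsset("product catalog", "ops lead", True, False, False),
]

# Most agent-readable first.
ranked = sorted(assets, key=lambda a: a.readiness(), reverse=True)
for asset in ranked:
    print(f"{asset.readiness()}/4  {asset.name}  (owner: {asset.owner or 'nobody'})")
```

A real ranking would also weigh how valuable each asset is, since a low score on a high-value asset is where the 90-day plan should start.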

The strategic read: Forget AGI. Forget the leaderboard. The strategic question for the next four quarters is what are the three data assets in this company that, if I architected them properly for agentic consumption, would compound into a moat? If you can’t answer that question by Friday, get the four people who could in a room and don’t let them out until you can. The Karpathy decade is the runway. Your data is the airplane. Most companies are building neither.

What This Means For You

The architects of the field just gave you a 10-year timeline against a marketing cycle screaming two quarters. Capability is racing. Buyer readiness isn’t. The price of the engineering just collapsed. The window to get serious without being late is open right now and won’t be open in 2028.

Stop pricing the AGI marketing. Start pricing the buyer-readiness gap. The companies that win the next decade aren’t the ones that picked the right model. They’re the ones that built the four things — top-down adoption, in-house expertise, a corporate brain, and clean architected data — while their competitors were arguing about benchmarks.

Build every layer to be swapped. The 17x cost compression deepclaude just demonstrated is coming for every component in your AI stack. Every brand-name vendor in your architecture is a counterparty risk if you can’t replace them in 90 days. Sovereignty over the stack — your weights, your hardware, your data — is the ultimate flexibility, and it’s the only ending where the moat compounds for you instead of the lab.

Treat your data as the product. The labs are the commodity. Your proprietary data, structured for an agent to consume, is what nobody else can replicate. Stack-rank the three data assets that would compound into a moat if you architected them right, name an owner for each, and put a 90-day plan on the calendar.

Hire the foreman, not the AGI. Karpathy’s other line — stop trying to hire agents, start supervising them — is the operating principle for 2026. Every senior hire this year should be evaluated on one question: can this person orchestrate a swarm of agents against our proprietary data, in a way that compounds the moat? If yes, hire them. If no, you’re staffing for the world that’s ending.

The Porsche is in the driveway. The keys are on the counter. Karpathy just told you which way to start the engine. The window is yours. Use it, or watch the operator down the street wave as they pass you on the highway.

Three Questions We Think You Should Be Asking Yourself

Who in this company owns the corporate brain? Not the brand book. Not the style guide. The actual machine-readable representation of how this company thinks, talks, sells, and refuses. If the answer is nobody, your agents will sound like everybody else’s agents — because they’re running on the same models. The corporate brain is the only thing that makes your agent yours. Hire that owner this quarter, or accept that your AI deployment will be undifferentiated from your competitor’s.

Can I swap any single component of my AI stack in 90 days? Walk through every layer — model, agent shell, vector store, orchestration, deployment, observability — and ask the question literally. If even one answer is no, that’s not a feature. That’s a leash, and it gets shorter every quarter. The lesson of deepclaude is that price compression is going to be brutal across every layer, and the companies that hard-coded vendor relationships into their architecture are the ones who’ll pay the brutality price.

If Karpathy is right and we have a decade, what’s the work I do this quarter that I can’t do later? This is the question every CEO should be sitting with on Monday morning. The architecture-doubt window is the one moment when you can do the unsexy work — data hygiene, expertise hiring, top-down adoption, brand voice in writing — without your board asking why you’re not shipping AGI yet. The window closes the day the next architecture lands. You don’t get this quiet again.

“It’s the decade of agents, not the year of agents.”

— Andrej Karpathy, on the Dwarkesh Patel podcast, May 2026

— Harry and Anthony

Sources

- Andrej Karpathy — AGI is still a decade away (Dwarkesh Patel)

- OpenAI Co-Founder: AI Agents Are Still 10 Years Away (The New Stack)

- Aligned News — AI homepage (Sunday Night, May 3, 2026)

- Five Eyes joint advisory on agentic AI (Insurance Business NZ)

- Anthropic Project Glasswing — Mythos limited release (NBC)

- “AI tool too dangerous to release could wreak havoc on businesses” (Sydney Morning Herald)

- deepclaude — Claude Code agent loop with DeepSeek V4 Pro (GitHub)

- Fortune — AI models are choking on junk data

- Codex Goes to Work — Every

- Why we pay Salesforce 83% more — SaaStr / Jason Lemkin

- Ineffable Intelligence — David Silver $1.1B raise
