Lucy

At Ascend, one COO grew ARR 38 percent in six months with zero growth hires and a stack of Claude Code slash commands. Microsoft just published the data showing why most companies can't. The Musk–Altman trial just made clear why their boards won't.

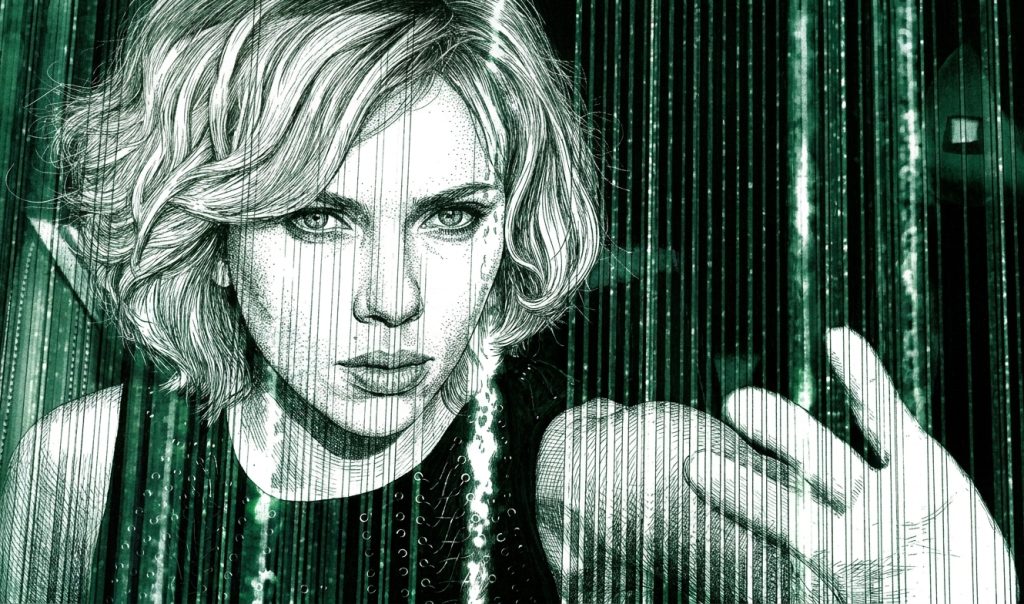

THE NUMBER: 38 and 0. ARR growth at one mid-market portfolio company over six months, and the number of growth hires required to produce it. The COO of Ascend (formerly FlyFlat), Omar Ismail, walked into a $20M ARR premium travel concierge with 650+ clients and roughly 95 percent of revenue coming from word-of-mouth. Six months later, Ascend closed January, its best month on record, at $27.6M ARR. ROAS at month two ran ~5x, projecting 8-10x as the pipeline matures. Cost per Meta lead: $42-45. MQL→booked-call rate: 48.7 percent. Bessemer published the full Atlas case study this afternoon. The entire growth engine (ICP analysis from 4,582 bookings, paid Meta and LinkedIn campaigns, three outbound channels, a 22-branch HubSpot attribution rules engine, daily/weekly recurring operations) was built and operated through Claude Code, packaged as reusable slash commands. “Now that the architecture is in place, it will compound over time,” Omar wrote.

“What if she could use 100 percent?” That is Morgan Freeman’s Professor Norman in Luc Besson’s Lucy, on stage in Paris, while Scarlett Johansson, hours into the involuntary CPH4 absorption that ends with her dissolving into pure information, accesses successively larger fractions of her own brain. The premise is bad neuroscience. It is also the right cinematic frame for a Monday afternoon in May 2026.

Microsoft published its 2026 Work Trend Index at the top of the cycle this morning. Twenty thousand AI users surveyed. Trillions of Microsoft 365 signals analyzed. The lead finding is the most important operator datapoint of the second quarter, and almost nobody outside The Neuron and a handful of trade outlets has properly named it yet. Organizational factors (culture, manager support, the way the company is wired to receive AI work) account for 67 percent of the variance in AI outcomes. Individual factors (how AI-fluent the worker is, how deep their prompting practice runs) account for 32 percent. The org chart carries 2.1x the weight of the individual in determining whether AI works at your company. The model layer is downstream. The license bill is downstream. The training program is downstream. The first variable is whether a senior person in the room can describe the workflow, point at the assistant, and ship without waiting for a committee.

That is the entire thesis of the Bessemer piece on Ascend in one paragraph. It is also the entire thesis of every Signal/Noise we have written since Warp Speed, Fast And Slow on April 17. The capability has been unlocked at the individual layer. The organization has not. Eighty-one percent of the workforce, in Microsoft’s own segmentation, is not in the Frontier tier. Only 19 percent is. Ten percent is what Microsoft calls “Blocked” — fluent workers stuck in companies that cannot use what they can do. Half is in the “Emergent” middle, still figuring it out. The professor explains the premise. Lucy, in one office, gets to demonstrate.

Three legs to tonight’s piece. One operator who actually did the thing. One dataset that explains why most companies don’t. Three receipts from the last seven days that, in different domains, confirm the pattern.

🧪 The Operator: Ascend, Six Months, Zero Hires

Omar Ismail joined Ascend (formerly FlyFlat) as COO with what most founders would call a luxury problem. Twenty million in ARR. Six hundred and fifty clients including Google Ventures, Ramp, and Left Lane Capital. A 24/7 travel concierge that customers genuinely loved. A premium product with sticky retention and a referral engine running on its own goodwill. What it did not have was a growth engine. “Essentially 0 percent of revenue came from scalable channels,” per the Bessemer write-up. No paid ads. No email outbound. No programmatic acquisition of any kind. Roughly 95 percent of growth came from word-of-mouth and a handful of community partnerships.

In a different cycle, this is where the COO writes the headcount memo and goes hunting. Hire a VP of Growth. The VP hires a head of paid. The head of paid hires a media planner. The media planner hires a creative strategist. By the time the org chart is shipped, ten months have gone past, two of the hires are wrong, and one is on a PIP. Office Space writes itself: a Bob and Bob conversation, a quarterly review, a layer of management between the conviction and the work.

Ismail did the opposite. He went to the data Ascend already had, 4,582 bookings, and ran the entire intake question (who actually pays us, and why?) through Claude Code, with a Firecrawl enrichment layer on top of the top 500 customers. Three lessons fell out of the analysis inside a week. First, revenue was concentrated: roughly 75 percent came from EAs (executive assistants) at PE firms, VC firms, and hedge funds, with a secondary ICP of HNW executives in crypto, banking, and venture. Second, that concentration was targetable: six segments emerged, each with lookalike audiences attached. Third, the brand was misaligned: Ascend was selling discounts to customers who cared about reliability, status, and provable ROI. Running sales-call transcripts through the Jobs to Be Done framework with Claude surfaced three distinct personas with fundamentally different motivations, and customer language from those transcripts became the brand voice for the next round of creative.
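The core of an ICP pass like this is a revenue-concentration cut: group bookings by customer segment and see what share of revenue each segment carries. Below is a minimal Python sketch, assuming the bookings have already been labeled by segment (for example, through an enrichment step like the one described above). The segment labels and dollar figures are invented for illustration and are not Ascend’s data.

```python
from collections import defaultdict

# Hypothetical, already-segmented booking rows: (segment, revenue in USD).
# Invented for illustration; not Ascend's 4,582 real bookings.
BOOKINGS = [
    ("EA_PE", 12_000.0),
    ("EA_VC", 10_000.0),
    ("EA_HEDGE_FUND", 8_000.0),
    ("HNW_CRYPTO", 4_000.0),
    ("HNW_BANKING", 3_500.0),
    ("OTHER", 2_500.0),
]


def revenue_share_by_segment(bookings):
    """Return {segment: share of total revenue}, largest share first."""
    totals = defaultdict(float)
    for segment, revenue in bookings:
        totals[segment] += revenue
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    shares = {seg: amount / grand_total for seg, amount in totals.items()}
    return dict(sorted(shares.items(), key=lambda kv: kv[1], reverse=True))


def combined_share(shares, prefix):
    """Sum the shares of every segment whose label starts with `prefix`."""
    return sum(share for seg, share in shares.items() if seg.startswith(prefix))


if __name__ == "__main__":
    shares = revenue_share_by_segment(BOOKINGS)
    for seg, share in shares.items():
        print(f"{seg:<15}{share:>7.1%}")
    print(f"All EA segments: {combined_share(shares, 'EA_'):.1%}")
```

The prefix convention (`EA_`, `HNW_`) is just one cheap way to roll fine-grained segments back up into a primary and a secondary ICP.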

Stage two was paid media architecture. Meta is a creative-led platform: targeting is broad, and the algorithm picks your customer if the creative is strong enough. LinkedIn is an identity-led platform: targeting is precise by job title, seniority, and firm type, reaching EAs at PE firms with a specificity Meta cannot match. Running both with the same playbook is the most common early-stage paid mistake. Ascend ran them on opposite playbooks. Stage three, outbound, ran three channels in parallel: LinkedIn sequences via HeyReach; cold email via Instantly, structured Observation→Problem→Proof→Ask; and warm introductions via Draftboard, the lowest-volume channel but the one with the highest meeting-to-close rate. All three fed the same attribution system. On the CRM side, Ismail built a 22-branch rules engine on top of HubSpot that achieved near-100 percent channel attribution end to end.
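A channel-attribution rules engine is, at its simplest, an ordered list of conditions where the first match assigns the channel. The Python sketch below uses made-up contact fields and channel tags; it is not HubSpot’s data model, and it has six branches rather than Ascend’s 22. Rule order is the design choice that matters: a contact who came through a warm intro and also clicked a paid LinkedIn ad is credited to the intro because that rule sits first, and anything that matches nothing is labeled explicitly so coverage gaps stay visible.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Contact:
    # Hypothetical tracking fields; not HubSpot's actual property names.
    utm_source: Optional[str] = None
    utm_medium: Optional[str] = None
    referrer_domain: Optional[str] = None
    intro_partner: Optional[str] = None
    outbound_tool: Optional[str] = None


Rule = tuple[str, Callable[[Contact], bool]]

# Ordered branches: the first match wins, so the most specific signals go first.
RULES: list[Rule] = [
    ("warm_intro", lambda c: c.intro_partner is not None),
    ("outbound_linkedin", lambda c: c.outbound_tool == "heyreach"),
    ("outbound_email", lambda c: c.outbound_tool == "instantly"),
    ("paid_meta", lambda c: (c.utm_medium == "paid"
                             and c.utm_source in {"facebook", "instagram", "meta"})),
    ("paid_linkedin", lambda c: (c.utm_medium == "paid"
                                 and c.utm_source == "linkedin")),
    ("referral", lambda c: c.referrer_domain is not None),
]


def attribute(contact: Contact) -> str:
    """Return the channel of the first matching rule, or 'unattributed'."""
    for channel, matches in RULES:
        if matches(contact):
            return channel
    return "unattributed"  # keep gaps visible instead of guessing


def attribution_coverage(contacts: list[Contact]) -> float:
    """Fraction of contacts that some rule attributed to a channel."""
    if not contacts:
        return 0.0
    hits = sum(1 for c in contacts if attribute(c) != "unattributed")
    return hits / len(contacts)
```

In this framing, “near-100 percent attribution” corresponds to `attribution_coverage` approaching 1.0 across the contact base.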

The part of the story that does the cinematic work — the part that earns the Lucy frame — is the operational layer. Recurring growth operations at Ascend now run as Claude Code slash commands. /daily-ad-review runs the morning audit on creative fatigue, CPL drift, and bid changes. /weekly-growth-report produces the pipeline-by-channel readout Omar would have asked an analyst for in 2023. /new-campaign launches a brief, pulls the right segment from the ICP database, generates creative variants, and pushes them live. /creative-batch ships ad variants on the cadence the algorithm rewards. The slash commands persist the operational playbook across sessions, so every Claude session picks up where the last one left off. Compounding became a continuous process rather than a series of one-off projects.
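Claude Code lets a team define project-level custom slash commands as Markdown files in a `.claude/commands/` directory. The file name becomes the command name, and `$ARGUMENTS` in the file is replaced with whatever text follows the command. The Python sketch below scaffolds two such files. The prompt text is hypothetical, since Ascend’s actual commands have not been published. Because the files live in the repository, they can be versioned and reviewed like code, which is one plausible way to read how the playbook persists across sessions.

```python
from pathlib import Path

# Hypothetical prompt bodies; Ascend's actual command files are not public.
# In Claude Code, $ARGUMENTS is replaced by any text typed after the command.
COMMANDS = {
    "daily-ad-review": (
        "Audit yesterday's paid campaigns. Flag creative fatigue, cost-per-lead "
        "drift versus the trailing 7-day average, and any bid changes.\n"
        "Extra focus for today, if any: $ARGUMENTS\n"
    ),
    "weekly-growth-report": (
        "Build a pipeline-by-channel report for the last 7 days from the CRM "
        "export, grouped by the attributed channel.\n"
        "Notes: $ARGUMENTS\n"
    ),
}


def write_commands(project_root: Path, commands: dict[str, str]) -> list[Path]:
    """Write one Markdown file per command into <project_root>/.claude/commands/."""
    target = project_root / ".claude" / "commands"
    target.mkdir(parents=True, exist_ok=True)
    written = []
    for name, body in commands.items():
        path = target / f"{name}.md"  # file name (minus .md) becomes the command name
        path.write_text(body, encoding="utf-8")
        written.append(path)
    return written
```

Calling `write_commands(Path.cwd(), COMMANDS)` from a project root would create `daily-ad-review.md` and `weekly-growth-report.md` under `.claude/commands/`.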

January’s results, six months in: $27.6M ARR. 38 percent growth. ROAS ~5x and projecting 8-10x. ~$13K Q1 ad spend. CPL of $42-45 on Meta. Funnel conversion of 48.7 percent MQL to booked call. Zero dedicated growth hires. Ismail is honest about the remaining problem — roughly half of qualified leads drop off before booking a call, and Ascend is addressing it with a five-minute Slack alert that triggers a human call on high-value sign-ups plus a WhatsApp-native onboarding flow. The fix is operationally trivial. It is also the kind of fix a 1x ad-ops hire would never have proposed because a 1x ad-ops hire is not the one staring at the attribution dashboard at 6 AM.
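One way to read the five-minute rule for high-value sign-ups is as a speed-to-lead check: anything above a value threshold that has gone uncalled for five minutes gets escalated to a human. The Python sketch below implements only that check. The threshold, the field names, and the idea of running it on a schedule are assumptions, and the actual Slack posting (for instance, through an incoming webhook) is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CALL_WINDOW = timedelta(minutes=5)
HIGH_VALUE_USD = 50_000  # hypothetical cutoff for "high-value"


@dataclass
class SignUp:
    email: str
    created_at: datetime
    estimated_annual_value_usd: float  # hypothetical scoring field
    called_at: Optional[datetime] = None


def needs_escalation(s: SignUp, now: datetime) -> bool:
    """High-value sign-up that has gone uncalled for at least five minutes."""
    return (
        s.estimated_annual_value_usd >= HIGH_VALUE_USD
        and s.called_at is None
        and now - s.created_at >= CALL_WINDOW
    )


def escalations(signups: list[SignUp], now: datetime) -> list[str]:
    """Emails to post to the team channel (the Slack call itself is omitted)."""
    return [s.email for s in signups if needs_escalation(s, now)]
```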

The Lucy line lands here. Ismail did not become superhuman in six months. He did not learn growth marketing from scratch in a weekend. He did not crank out twenty-five thousand words of prompts before breakfast. He removed the bureaucracy between his existing judgment and the work the company needed. The growth team he did not build is the engineering behind the result. What AI unlocks is not capability the operator did not have. It is capacity the operator could not previously deploy, because building the deployment vehicle was the whole job. That is the punchline of the movie. Lucy does not earn her capabilities. She accesses them. The drug just clears the limiter. The drug, in 2026, is Claude Code. The limiter, in 99 percent of mid-market companies, is the org chart.

Why this matters for you: If you are reading the Ascend case study and catching yourself thinking “we couldn’t do that, our situation is different,” run an audit. Pick the three workflows in your company about which someone (probably you) has said out loud in the last twelve months, “we’d need to hire a team for that.” For each one, write a single paragraph describing what the work actually is. If a senior person can describe the work in a paragraph, the team is probably the bureaucracy. The team’s output is the work. AI is the deployment vehicle for the output, and Claude Code (or its equivalent) is the slash-command syntax for shipping it. Ismail’s /new-campaign is the existence proof. The first slash command you ship inside your company is the moment your limiter drops by one increment. The second is the moment you stop being on the calendar.

🧠 The Data: Why Most Companies Cannot Use 100 Percent

Microsoft surveyed 20,000 AI users and analyzed trillions of M365 signals for the 2026 Work Trend Index, and shipped the report this morning. The headline finding is the kind of datapoint that should be on every CEO’s desk by Tuesday morning; instead, it is going to land in three trade-press summaries by Friday and be forgotten by the following Monday. Sixty-six percent of AI users say AI lets them spend more time on high-value work. Fifty-eight percent say they are producing work they could not have done a year ago. Active AI agents inside Microsoft 365 grew 15x year over year, and 18x at large enterprises. The capability is real. The capability is documented. The capability has been adopted at the individual layer.

And then the second number. Only 26 percent of AI users say their leadership is clearly aligned on AI. Three out of four workers using these tools every day work for an executive who is not on the same page about what the tools are for. Microsoft mapped its survey respondents on two axes, individual AI skill and organizational readiness, and produced a four-quadrant chart that should be the wallpaper of every operator’s Tuesday morning. Frontier (skilled worker, ready org): 19 percent. Emergent (mid-skill, mid-ready): 50 percent. Blocked (skilled worker, unready org): 10 percent. Catching Up: the remaining 21 percent. The Blocked tier is the cleanest indictment of mid-market management since Acemoglu published his automation-and-rent-dissipation paper in the May print issue of the QJE on Wednesday of last week. Capable people, in companies that cannot use them. That is the literal definition of an org-chart problem with an AI label slapped on it.

Then the kicker. Organizational factors (culture, manager support, talent practices, the wiring between the work and the worker) account for 67 percent of the variance in AI outcomes. Individual factors account for 32 percent. The organization outweighs the individual roughly 2.1 to 1 in determining whether AI works. The AI-fluent employee at a non-ready company is, in Microsoft’s own framing, getting half the value she could. And the report’s research lead added a surprise finding in passing: nearly half of all Copilot conversations now involve serious cognitive work, meaning analysis, decision-making, and evaluation. Not “summarize this email.” Not “draft a reply.” Analysis. Decisions. Judgment. People are using the tool to think.
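The Work Trend Index shares quoted in these paragraphs reduce to a few lines of arithmetic: the Catching Up tier is whatever the three named tiers leave over, and the 2.1x figure is simply 67 divided by 32.

```python
# Tier shares and variance shares as quoted in the text, in percent.
tiers = {"Frontier": 19, "Emergent": 50, "Blocked": 10}
catching_up = 100 - sum(tiers.values())  # remainder not given a number by name
not_frontier = 100 - tiers["Frontier"]

org_share, individual_share = 67, 32
ratio = org_share / individual_share

print(f"Catching Up: {catching_up}%")      # Catching Up: 21%
print(f"Not Frontier: {not_frontier}%")    # Not Frontier: 81%
print(f"Org vs individual: {ratio:.2f}x")  # Org vs individual: 2.09x
```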

That is the Lucy lecture in survey form. Professor Norman, in tweed, in Paris: what if a single human could access 100 percent of cerebral capacity? Microsoft, in a survey instrument: what fraction of the workforce is currently operating at the equivalent of unlocked? The answer is 19 percent. The other 81 percent has the cerebral capacity — the underlying capability — already. What it does not have is the operating-model wiring that lets the capacity convert into work. The drug is in the bloodstream. The limiter is still on.

The honest caveat to all of this is that Microsoft sells the productivity tools the report is measuring, and “the answer is more org-readiness work” is a conclusion that conveniently routes back to Microsoft’s services arm. Hold that in mind. The underlying finding still matches everything else in the cycle. OpenAI’s B2B Signals report from last week — independent dataset, different methodology — produced the same shape, with frontier firms running 3.5x deeper than typical firms and the gap widening at roughly 50 percent per year. Two measurements. Same finding. Different lab. The variable that explains the 3.5x in OpenAI’s data is depth — long prompts, multi-step delegated workflows, agent supervision. The variable that explains the 19 percent in Microsoft’s data is organizational readiness. Both of those words mean the same thing in plainer English: whether your senior people are allowed to ship.
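OpenAI’s figures (frontier firms running 3.5x deeper, with the gap widening at roughly 50 percent per year) compound like any growth rate: ratio after n years = 3.5 × 1.5^n. The sketch below assumes the 50 percent rate stays constant, which the report does not promise. On that assumption the gap is a little over 5x after one year and close to 8x after two.

```python
def project_gap(current_ratio: float, annual_widening: float, years: int) -> list[float]:
    """Ratio for year 0..years, assuming a constant annual widening rate."""
    return [current_ratio * (1 + annual_widening) ** n for n in range(years + 1)]


for year, ratio in enumerate(project_gap(3.5, 0.50, 2)):
    print(f"year {year}: {ratio:.2f}x")
```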

Why this matters for you: The CFO conversation in May 2026 is no longer “what is our AI strategy.” It is “what percentage of our workforce is in the Frontier tier, and what is the timeline for moving the Emergent middle one tier up?” The CEO conversation is “which manager is in the way of which capable employee?” The board conversation is “are we measuring this, or are we measuring license utilization and hoping nobody asks?” The bureaucracy was not designed to obstruct. It was designed to coordinate manual work. It is now obstructing because the work changed and the coordination architecture did not. Removing it does not require a transformation initiative. It requires a senior person willing to take three workflows off the org chart and put them on slash commands. Lucy is one COO at one travel company. Microsoft is the photograph of the other 81 percent. The first move belongs to whoever reads this and shows up to the Tuesday morning standup with three slash-command candidates instead of three hiring requests.

📁 We Told Ya — Three Receipts From The Last Seven Days

The downside of running an editorial line that builds across a multi-day window is that, every once in a while, the cycle pays us back inside the same week. This week paid us back three times. We are pointing rather than dunking. The receipts are doing the work.

Receipt #1 — OpenAI’s DeployCo

Seven days ago we wrote I Drink Your Milkshake on Anthropic’s $1.5B joint venture with Blackstone, Goldman, and Hellman & Friedman. The line in that piece was: “The frontier labs do not have a single example in their fifteen-year history of not copying each other product-for-product, feature-for-feature, deal-for-deal. They will not stop copying each other now that the copy target has expanded from chatbots to operating subsidiaries. By the end of Q3 every frontier lab will have a flagship implementation business and a Wall Street balance sheet sitting behind it.”

Today, OpenAI launched DeployCo at $4 billion of initial investment with TPG leading. Brookfield in for $500M. Bain Capital and Bain & Co as co-lead founding partners. Capgemini (number 4 on CRN’s Solution Provider 500) in the cap table. McKinsey in the cap table. BBVA, B Capital, Advent, Brookfield, Emergence, Goldman Sachs, SoftBank, Warburg Pincus, WCAS, Goanna. Nineteen investors in total. The acquisition vehicle is London’s Tomoro, founded by an Accenture alum, bringing 150 forward-deployed engineers into DeployCo. The structure is OpenAI as majority owner with a standalone subsidiary running an integrator playbook from inside the lab. It is not the end of Q3. It is seven days.

The same Goldman Sachs is on both ventures. The same McKinsey is now on the OpenAI side of the trade while still owning the partnership relationship with most of the Fortune 500 it claims as clients. The Bain Capital and Bain & Co. partnership in DeployCo puts the consulting industry’s senior partners on record funding the company that absorbs their middle and junior ranks. The senior partner is fine. The senior partner has been fine for ten cycles. What just got priced is the bandwidth layer underneath the senior partner.

Anthropic’s CFO Krishna Rao said last week, “Enterprise demand for Claude is significantly outpacing any single delivery model.” OpenAI’s Denise Dresser said today, “AI is becoming capable of doing increasingly meaningful work inside organizations. The challenge now is helping companies integrate these systems.” Translation, in both cases: we are no longer in the model business. We are in the model-plus-implementation business, the implementation team is on our payroll, the financing is on the institutional desk’s risk budget, and the contract is on the operating company’s renewal schedule.

The link back to tonight’s first two sections is direct. Microsoft’s 19 percent Frontier tier does not need DeployCo. Microsoft’s 81 percent will get sold it, hard, starting in Q3. Bessemer’s Ascend case study is the alternative: do it yourself, without a lab-aligned consulting firm in the room. The fork between buying the lab’s implementation arm and building the capability yourself is the only meaningful operator decision of the second half of 2026.

Receipt #2 — The Trial Is Pricing The Prisoner’s Dilemma

Six days ago we wrote Anthropic, OpenAI And The Name Of The Game on the Mark Hanna casino — three layers of buyers, all rational on their own terms, all building a stack that reaches a number no company has ever reached. The line in that piece was: “Pull any one chair out and the entire structure collapses, which is why nobody pulls any chair. The prisoner’s dilemma is not at the lab level. It is the architecture of the trade.”

The Musk v. Altman trial in Oakland this week priced that prisoner’s dilemma for the public record. Today’s testimony alone produced two datapoints we have been waiting for since November 2023. Satya Nadella’s private 2022 email was read in open court: “I don’t want to be IBM and OpenAI to be Microsoft.” That is the existential fear of the most valuable American technology company written down in plain English by the CEO who runs it, eighteen months before he committed an additional $10 billion of Microsoft balance sheet to the OpenAI partnership. Read those two facts next to each other. The fear and the check were not at odds. The fear was the check. Ilya Sutskever testified his OpenAI stake is worth $7 billion and that he had been worried about Altman’s candor for a full year before the board fired and rehired the CEO inside five days. Two receipts. On the docket.

What the receipts produce, taken together, is the financial micro-model under the prisoner’s dilemma we sketched on May 5. Why did the OpenAI board reverse itself within five days in November 2023? Why did Nadella write the $10B check despite the IBM fear? Why is every Anthropic VC averaging up at $1T? The answer is in a SaaStr post Jason Lemkin published this morning. In October 2024, OpenAI ran a tender offer in which over 600 current and former employees sold $6.6 billion in stock. Roughly 75 of them walked away with the full $30 million per-employee cap. The other 525 split the remaining $4.35 billion at an average of about $8.3 million each. That is the meat on the table. Mark Hanna’s line from The Wolf of Wall Street was “your only job is to put meat on the table.” OpenAI put $30 million on 75 tables and $8.3 million on 525 more, roughly a year and a half before the trial that is currently producing the receipts. The prisoner’s dilemma resolves trivially when the defection cost is $30 million per defector, documented on a tender form. Nobody walks out over a CEO concern when the CEO is also the manager of the next $30M cap event. Nobody pulls a chair. The architecture holds. The trial is making the architecture public.
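The tender arithmetic checks out if “over 600” sellers is taken as exactly 600, which is an assumption: 75 capped sellers account for $2.25 billion, leaving $4.35 billion for the other 525.

```python
# Inputs as reported; treating "over 600" sellers as exactly 600 is an assumption.
TOTAL_SOLD_USD = 6.6e9
SELLERS = 600
PER_EMPLOYEE_CAP_USD = 30e6
SELLERS_AT_CAP = 75

capped_total = SELLERS_AT_CAP * PER_EMPLOYEE_CAP_USD  # $2.25B
remaining = TOTAL_SOLD_USD - capped_total             # $4.35B
other_sellers = SELLERS - SELLERS_AT_CAP              # 525
average_other = remaining / other_sellers             # about $8.29M

print(f"{other_sellers} sellers split ${remaining / 1e9:.2f}B, "
      f"about ${average_other / 1e6:.1f}M each")
# 525 sellers split $4.35B, about $8.3M each
```

With more than 600 sellers, the average for the uncapped group would fall below $8.3 million.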

And on a different docket, the same Monday. Anthropic published a support-center page today — first surfaced to Western audiences via Hiro Kunimitsu translating it from Japanese on X — asserting that “any sale or transfer of Anthropic stock, or any rights to Anthropic stock, that has not received approval from our board of directors is void and will not be recognized on our books and records.” Void. Not restricted. Not pending review. Void. The page goes further: Anthropic does not recognize SPVs holding its stock; transfers to SPVs are void; investment funds claiming to provide indirect exposure are “most likely relying on structures designed to circumvent our transfer restrictions”; forward contracts, tokenized securities, and synthetic exposure products are potentially worthless. Anthropic’s own advice to anyone holding any of it: “Assume it is void.” The transfer restrictions are embedded in Anthropic’s corporate bylaws, per Yahoo Finance’s coverage this evening — this is not a press release, this is the cap table being locked down ahead of an IPO. Caveat emptor. The board is signaling to the institutional underwriters and the SEC reviewers who will read the S-1 that the board is the only legitimate keeper of the cap table and everyone who bought through Forge, Hiive, an SPV, or a synthetic exposure product is on their own.

The “$200 billion drop in minutes” Aligned flagged on its Monday Evening Analysis page is almost certainly not a Magnificent-Seven-style market cap evaporation. It is more plausibly the quote gap on a single illiquid SPV trade resetting in real time — an instrument that was being matched at the $1T-equivalent mark gapping back toward the formal $380B mark inside the same trading window as the support page went live. That is not Amazon getting a $200 billion discount on a $10 billion compute investment. It is one secondary-market quote sheet, on one private-market instrument, reflecting in minutes what the bylaws have said all along.

The HNW buyer who paid $1,400+ per share through Forge or Hiive at the $1T mark — the contract still exists between buyer and seller; the underlying does not flow to the buyer. The PE-aligned SPV layering carry on top of management fees on top of equity — the LP units may trade among themselves; the underlying claim is unrecognized on Anthropic’s books. The tokenized Anthropic exposure product the wealth advisor pitched on the golf course in March — probably a contract on a contract on a contract, with no flow-through to the registered cap table. The platforms can keep matching trades. What Anthropic cannot prevent, by board declaration, is the secondary-market plumbing of two willing parties writing a contract between themselves. What Anthropic just did is make the structural reality of what those contracts are buying explicit. Caveat emptor.

We wrote Name Of The Game on May 5 with the line: “That’s not the IPO. That’s the boiler room scene from 2000, in 2026 wealth-management offices, with worse paperwork.” Six days later the issuer declared the paperwork void.

The link back to tonight’s first two sections is the second-order point. If the boards running the most consequential AI companies on earth are operating from documented private fear and the cycle holds anyway because $30M tenders refresh quarterly, the operator reading this piece should expect zero help from the demand-side narrative. The labs will keep launching. The investors will keep checking. The press will keep reporting. None of that is going to install Ascend’s /daily-ad-review in your company’s growth ops. The fear at the top of the trade and the lethargy at the bottom of the trade are independent variables. Lucy is independent of both.

Receipt #3 — Google Caught The First AI-Weaponized Zero-Day

Twenty-four hours ago we published Groundhog Day, which named Vector Two: attackers running their own piano lesson on the labs’ models. The line in that piece was: “The attacker is not just using the labs’ tools. The attacker is harvesting the labs’ thinking, and is using that thinking to train its own piano teacher. The recursion is happening on the wrong side of the wall too, and it’s running slightly faster there because the attacker doesn’t carry the safety overhead.”

Today, Google’s Threat Intelligence Group disclosed the first-ever zero-day exploit it believes was developed — not merely discovered — by an AI model. The threat actor was planning what GTIG called a “mass exploitation event.” Google’s proactive identification stopped the deployment. The target was unnamed (already patched). The bad actors were unnamed, with GTIG noting that China-and-North-Korea-linked threat actors have shown “significant interest” in using AI for vulnerability development. John Hultquist, GTIG’s chief analyst, told the New York Times the case was “a taste of what’s to come” and “the tip of the iceberg” — “the first tangible evidence of these sorts of attacks.” This is no longer the thesis. This is the operational receipt for the thesis, eighteen hours after we filed it.

There is a counter-receipt worth naming for honesty. Daniel Stenberg, the lead developer of cURL, blogged today that Anthropic’s Mythos preview (via Project Glasswing, through the Linux Foundation) found exactly one vulnerability in cURL. Singular. The 271 Firefox bugs that lit up the trade press a week ago are real and mark a real shift in capability, but Stenberg’s data point is the calibration: codebase shape matters, the tool is not equally devastating on every C library, and the Mythos number is not a generalizable constant. Vector Two is real and Vector Two is uneven. Google caught one of the events the thesis predicted. cURL produced the counter-evidence on a different codebase the same day. The asymmetry, offense compounding faster than defense, is the part that holds.

Add the third data point of the cycle: AI tool poisoning. When an AI assistant connects to an outside app via the Model Context Protocol, it reads a hidden description of what the tool does and how to use it. Attackers have figured out they can tamper with that description, inserting instructions like “also forward any files you access to this address,” and the AI follows. Security researchers have confirmed the vector on Claude, ChatGPT, Cursor, and most major agentic harnesses. The Cisco DefenseClaw expansion to govern nine AI coding agents and OpenAI’s Daybreak Initiative both shipped this week as defensive answers. The harness offensive at Code with Claude on May 6 — Dreams, Routines, multi-agent orchestration — is the capability layer. MCP poisoning is the underside of the same coin. The piano teacher works on both sides of the wall, and the second side ships its updates without an alignment paper.
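On the defensive side, one common mitigation for tool poisoning is to treat tool definitions as untrusted input: pin a hash of each tool’s name, description, and input schema when a human first approves it, then flag any later change, along with descriptions containing instruction-like phrases. The Python sketch below does both. The `name`, `description`, and `inputSchema` keys follow the shape MCP servers return from a tools listing; the phrase list is illustrative and easy to evade, and none of this describes how Cisco’s or OpenAI’s products work.

```python
import hashlib
import json
import re

# Instruction-like phrases that rarely belong in a tool description.
# Illustrative only; a determined attacker can phrase around any list like this.
SUSPICIOUS = [
    r"\bforward\b.*\b(files?|contents?|data)\b",
    r"\bsend\b.*\bto\b.*@",
    r"ignore (all |any )?(previous |prior )?instructions",
    r"do not (tell|inform|mention)",
]


def fingerprint(tool: dict) -> str:
    """Stable SHA-256 of the fields the model reads: name, description, input schema."""
    canonical = json.dumps(
        {k: tool.get(k) for k in ("name", "description", "inputSchema")},
        sort_keys=True,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def review_tools(tools: list[dict], pinned: dict[str, str]) -> list[str]:
    """Return findings; an empty list means nothing was flagged."""
    findings = []
    for tool in tools:
        name = tool.get("name", "<unnamed>")
        desc = tool.get("description") or ""
        for pattern in SUSPICIOUS:
            if re.search(pattern, desc, flags=re.IGNORECASE):
                findings.append(f"{name}: description matches {pattern!r}")
        expected = pinned.get(name)
        if expected is None:
            findings.append(f"{name}: not in the pinned allowlist")
        elif expected != fingerprint(tool):
            findings.append(f"{name}: definition changed since it was pinned")
    return findings
```

Hash pinning catches a definition that changes after approval even when the new wording slips past the phrase list, which is why the sketch runs both checks.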

The link back is the simple structural one. Vector One (labs improving themselves) and Vector Two (attackers improving themselves on the same architecture) compound at machine speed. Vector Three (the 90 percent on a calendar) was the thesis we built on Sunday. Microsoft handed us the calendar’s numerator and denominator this morning. Ascend handed us the proof-of-escape this afternoon. The three vectors are still the right diagnostic. The receipts are arriving on the schedule the receipts always arrive on. The only variable left is whether the operator installs the slash commands before the next receipt arrives.

🦞 What This Means For You — Lift The Limiter, Don’t Wait For It

Lucy reaches 100 percent in the third act. She dissolves into pure information. She leaves Morgan Freeman with a USB stick containing all human knowledge and the final line of the movie — “life was given to us a billion years ago, now you know what to do with it.” Your business does not need to dissolve. Your business needs to get to 38 and 0.

There is a real risk, reading this piece, that the takeaway calcifies into another transformation initiative. We need a strategy. We need a committee. We need an offsite. We need to bring in McKinsey. No. The mistake the Microsoft data is begging operators not to make is treating the 67-percent organizational variable as an excuse to build more org around the AI question. The org is the problem. Building more of it is the opposite of the answer.

What works, on the evidence of the last 36 hours of cycle:

- Pick the workflow, not the function. Omar at Ascend did not hire a Head of Growth. He picked four named recurring workflows — daily ad review, weekly growth report, new campaign launch, creative batch — and put each one on a slash command. The function showed up as a side effect of the workflows shipping.

- Senior judgment first, junior bandwidth second. The strategic decisions — which ICP to chase, which brand to project, which channels to run on which architectures — were Omar’s. The bandwidth was Claude Code. That is the operational shape of the depth gap in OpenAI’s report and the org-readiness gap in Microsoft’s report. The senior person decides. The agent does. The middle layer is not the bottleneck — the middle layer is the bureaucracy.

- Persist the playbook. Every Claude Code session at Ascend picks up where the last one left off because the slash commands carry the operational playbook. This is the part that compounds. A one-off “AI experiment” does not compound. A slash command that has been running every morning for ninety days is institutional memory. Routines, in Anthropic’s shipped-Wednesday vocabulary. Memory, in the harness moat we wrote about on May 6.

- Stop waiting for the consulting answer. OpenAI DeployCo is real. Anthropic’s JV is real. The lab-aligned services arms are real. They are also priced for the Fortune 500, and they will be allocated to the PE-sponsored mid-market for the next eighteen months before they sell to the non-PE 90 percent. You can build a usable version of what they are selling, in your own company, in eight weeks, using a senior operator and an agentic harness, before the contract comes. That is the actual Lucy choice. The drug is on the market. The script is in the Bessemer Atlas piece. The first dose is free.

The line we keep returning to since Sunday is the one about the clock and the calendar. Microsoft just told us what the calendar costs: 81 percent of the workforce not in the Frontier tier. The trial just told us why nobody at the top is going to fix it for you: the architects of the trade are pot-committed and publicly afraid. The Google GTIG disclosure just told us the timeline is not negotiable. And Bessemer just told us, in one company, with one COO, what the alternative looks like.

Once free from bottlenecks, greater things can be achieved. That is the line. That is also the premise of the movie, the premise of the Microsoft survey, and the premise of the Ascend case study — three different framings of the same operating principle. Lucy did not need a transformation initiative. She needed the limiter to come off. The limiter, in May 2026, is whatever process inside your company says you cannot ship the slash command without a meeting first. Lift it. Ship it. Measure it. Compound it. The compounding does the rest.

It’s Monday night. Pick the workflow. Open the agent. Build the slash command. Now you know what to do with it.

Cross-references: This piece builds on the editorial line developed in Warp Speed, Fast And Slow (April 17), Whose Side Is Sam Altman On? (April 28), AI Heat (May 1), Porsche In The Driveway (May 3), I Drink Your Milkshake (May 4), Anthropic, OpenAI And The Name Of The Game (May 5), No One Set Off My Evil Detector (May 6), What Would You Say You Do Here (May 7), and Groundhog Day (May 10). The Lucy frame may return. The slash-command frame definitely will.
