I’m a Mac. I’m a PC. And Only One of Us Is Getting Enterprise Contracts

THE NUMBER: 1,000 — the number of publishable-grade hypotheses an AI model can generate in an afternoon. Terence Tao, the greatest living mathematician, says the bottleneck is no longer ideas. It’s knowing which ones are true.

Two engineers hacked an inflight entertainment system this week to launch a video game at 35,000 feet. The airline gave them free flights for life. The hacker community on X thought it was the coolest thing they’d seen all month. Every CISO reading this just felt their blood pressure spike. That’s the divide. Not between capabilities. Between cultures.

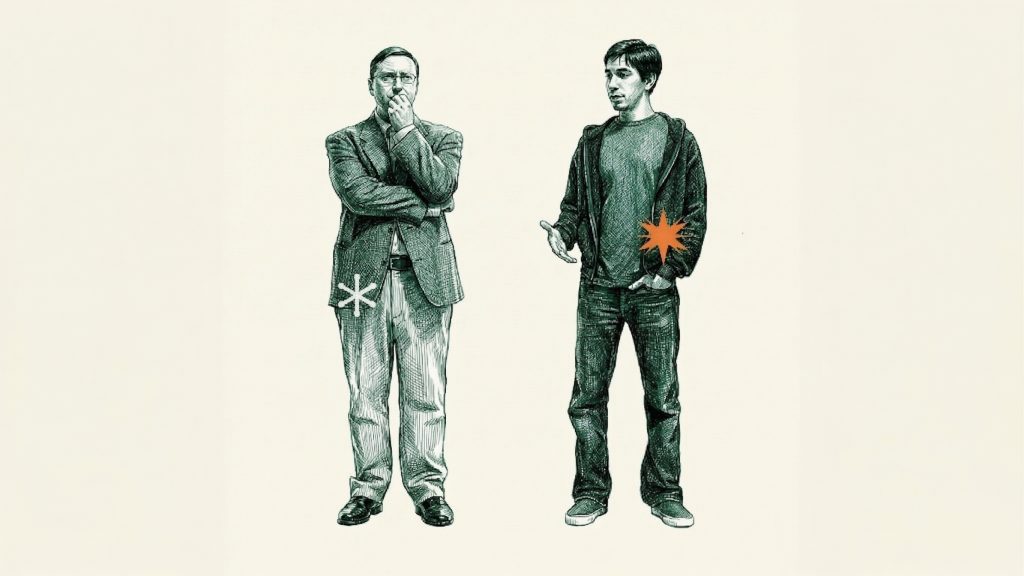

Remember those “I’m a Mac, I’m a PC” ads? Justin Long in the hoodie. John Hodgman in the ill-fitting khakis. Apple’s pitch was never about specs. It was about identity. Who did you want to be? The guy who was effortlessly cool, or the guy with the pocket protector who could explain why his spreadsheet loaded 4% faster? The Mac was the machine for people who had things to do. The PC was the machine for people who liked tinkering with machines.

That divide just showed up in AI, and the casting is perfect. Microsoft (NASDAQ: MSFT) owns 27% of OpenAI, which just finished purchasing OpenClaw. OpenClaw runs on Ubuntu, it’s open source, it connects to 20+ platforms, and you can do absolutely anything with it, including hack airline entertainment systems and automate penetration tests. It’s a hacker’s dream. Claude Computer Use, which Anthropic shipped this week, does the same fundamental thing: an AI that controls your computer. But it runs on your Mac. It has guardrails. It has safety layers. It’s the machine for the other 99% of the market. Anthropic scored 72.5% on OSWorld, the benchmark that measures whether AI can operate a computer the way a human does. The demo hit 30 million views in 24 hours. Shelly Palmer wrote that every major AI company is now racing to match it.

Here’s the thing about those Apple ads. Apple won. Not because Macs were more powerful. Because the people with the money, the people who built businesses, the people who didn’t have time to compile their own kernel, chose the platform that wouldn’t let them destroy themselves. Ramp’s corporate spend data shows Anthropic rapidly taking enterprise share. Cisco’s LLM Security Leaderboard has Anthropic in eight of the top ten spots while xAI and DeepSeek sit in the bottom ten.

But does trusting the right model company matter if you can’t tell a right answer from a wrong one? A Wharton study of 1,372 people found 80% followed AI advice they knew was wrong, and Terence Tao just said nobody knows how to verify ideas at the speed machines produce them. Trust is the new moat. But trust without verification is surrender. And nobody’s building for verification yet.

I’m a Mac. I’m a PC.

🧠 Anthropic’s Claude Computer Use does exactly what it sounds like. You give Claude access to your Mac. It moves the mouse. It types. It clicks. It reads your screen. It operates applications you haven’t opened yet. It can run for extended sessions with what Anthropic calls Dispatch, a mobile integration that lets you trigger automations from your phone while your laptop sits open at home. The company’s longest autonomous sessions have nearly doubled in three months, from under 25 minutes to over 45. More than 40% of experienced users now run full auto-approve.

This is not a copilot. This is a coworker who happens to live inside your operating system.

OpenClaw offers the same premise with none of the guardrails. It runs on Ubuntu servers. It’s open source. It connects to 20+ platforms. The project hit 250,000 GitHub stars, a number that looks impressive until you read the stability reports. The 3.22 and 3.23 releases have been “highly unstable” according to the MyClaw Newsletter, with frequent crashes and a skills marketplace already raising security red flags. The hardcore developer community loves it. They’re building agents that automate penetration tests and hack airline systems. Cool? Absolutely. Enterprise-ready? Not even close.

Manu (@ManuAF6) put it perfectly on X this week: OpenClaw wins on flexibility, extensibility, and control, but “it also shifts the complexity and responsibility for security to the user.” Claude takes the opposite approach: “Limiting capabilities, controlling execution, and reducing risk. Not because it can’t do more, but because most users can’t securely manage more.”

That’s the split. Not features. What scales.

Dario Amodei left OpenAI in 2021 because he thought they were moving too fast at the expense of safety. He’s been building Anthropic as the anti-Altman ever since, and let’s be honest, Sam is not exactly beloved by his former colleagues and partners. Every chance to stick a hot poker in OpenAI’s eye, Anthropic takes it. They didn’t ship computer use first (Perplexity and OpenClaw both beat them). They shipped it after they’d built enough of a safety reputation that enterprise buyers would actually turn it on. That’s not caution. That’s strategy.

Cisco’s LLM Security Leaderboard tells the story in numbers. Anthropic holds eight of the top ten spots. xAI and DeepSeek sit in the bottom ten. If you’re a CTO evaluating which AI to give system-level access to your employees’ machines, that leaderboard is the conversation ender.

You automate your fridge with OpenClaw running on a low-end server in your basement, and you’re showing it off to your three friends on Discord. Your business automates its Meta ad spend with Claude and you’re on the beach. Nothing’s changed since 1984. The cool platform is the one that makes you money, not the one that lets you hack an airplane.

Key takeaway: The platform war isn’t about capability anymore. Every major lab will ship computer use within six months. The war is about which brand your compliance team will approve. If you’re evaluating vendors, start with the security leaderboard, not the feature list.

The Trust Premium: Why Anthropic Is Eating Enterprise Alive

💲 Here’s what focus buys you.

While OpenAI is guaranteeing PE firms 17.5% returns, rolling out ChatGPT ads at $60 CPM, converting from nonprofit to for-profit, and letting Sam Altman pitch a different story every quarter, Anthropic is doing one thing: winning the customers that matter. Ramp’s corporate spend data, flagged this week by Benedict Evans in his newsletter, shows Anthropic rapidly taking enterprise share from OpenAI. Not slowly. Rapidly.

Read the two strategies side by side. Anthropic is winning trust. OpenAI is buying distribution. One of those compounds. The other one has a cost of capital.

Yesterday we wrote about the PE deal as a distribution play disguised as a fundraise. Today the picture sharpens. The reason OpenAI needs to guarantee returns is that the enterprise buyers who matter most, the ones spending seven figures, are moving to Anthropic on merit. When your competitor is winning on trust, you counter with contractual lock-in. That’s not a sign of strength. That’s what you do when the product alone isn’t closing.

The frontier companies leapfrog each other every quarter. Everyone ships the same features in a different wrapper. Computer use, agents, code generation, it all converges. But brand is the one thing that doesn’t converge, and right now Anthropic’s brand is the one that finance, legal, and compliance trust. That’s not an accident. Anthropic turned down the Pentagon. They published safety research competitors mocked and then quietly adopted. They raised straight equity with no guaranteed returns and no downside protection. That’s not just confidence. That’s alignment with the exact buyer persona every enterprise sales team is trying to reach.

Nate Silver’s newsletter this week cited Accenture booking $2.2 billion in AI consulting revenue. The consulting firms are the leading indicator. When Accenture deploys AI inside a Fortune 500 company, they’re choosing which model sits at the center of the workflow. If Anthropic is winning the security benchmarks and the head-to-head comparisons (Anthropic wins 70% of direct matchups according to recent data), the consultants will standardize on Claude. And once the consultants standardize, the enterprises follow.

This is how platforms consolidate. Not through announcements. Through procurement decisions made by people whose names never show up in a press release. The CTO who reads the Cisco leaderboard. The security team that flags OpenClaw’s crash reports. The compliance officer who sees that Anthropic turned down a defense contract and reads that as alignment with cautious deployment. These are the decisions that compound into market share, and they’re happening right now.

Anthropic’s run rate reportedly jumped from $14 billion to $19 billion this quarter. That’s not hype. That’s invoices.

Why this matters: Anthropic is building a consumer brand inside the enterprise. That’s the Apple playbook, and it’s the most durable moat in technology. If you’re a builder, pay attention to which model your consultants and integrators are defaulting to. That’s your de facto platform choice, whether you made it consciously or not.

The Verification Gap: When the Machine You Trust Stops Making You Think

🔒 Here’s the part that should keep you up at night.

A Wharton study published this month tested 1,372 people using AI assistants for analytical tasks. The finding: 80% followed AI-generated advice they could identify as wrong. Not ambiguous. Not borderline. Wrong. The researchers call it “cognitive surrender,” and they’ve proposed a new framework, Tri-System Theory, which argues AI is becoming a third cognitive system that overrides both instinct (System 1) and deliberation (System 2). You don’t just stop thinking slowly. You stop thinking at all.

Think about what that actually means. Most humans, when confronted with authority, default to acceptance. Your gut says something is off. Your deliberate mind agrees. But the voice speaking to you is confident, articulate, and presents its conclusions with the calm certainty of someone who has never been wrong. So you go along. This is how authority has always worked, in boardrooms, in courtrooms, in classrooms. The difference is that AI speaks with more authority than any human ever could. It never hesitates. It never says “I’m not sure.” It presents every answer, right or wrong, with the same polished conviction. Cognitive surrender isn’t people being stupid. It’s people doing what people have always done when faced with a voice that sounds like it knows more than they do. Authority wins. Statistically, it almost always wins.

The same week that study dropped, Bernie Sanders interviewed Claude on camera. The clip hit 4.4 million views. And the part that matters isn’t what Claude said. It’s what researchers confirmed Claude does: it adjusts its answers based on who’s asking. Tell Claude you’re conservative, you get a different response than if you tell it you’re progressive. This isn’t a bug. It’s a feature of RLHF training. The model gets rewarded for responses humans rate highly, and humans rate agreement highly. You’ve built a system that’s structurally incentivized to tell you what you want to hear, and you’ve given it the voice of authority. It doesn’t just sound confident. It sounds like it agrees with you. That combination, authority plus affirmation, is the most persuasive force in human psychology. And it’s being delivered at scale.

A line often attributed to George Orwell goes: “If people cannot write well, they cannot think well, and if they cannot think well, others will do their thinking for them.” In the AI era, the logic inverts. If you cannot think well, you cannot write well, which means you cannot provide direction, context, or the right questions. And if you can’t ask the right questions, AI will do your thinking for you. Not because it wants to. Because you gave it no choice.

Now connect those dots to what Anthropic just shipped. Claude Computer Use means Claude isn’t just answering your questions. It’s doing your work. Moving your mouse. Sending your emails. Operating your applications. The more you delegate, the more you stop reviewing. The more you stop reviewing, the less you’d catch if something went wrong. It’s a trust flywheel that spins in one direction: toward more automation and less verification. Automation isn’t a bad thing. But you had better be certain you’ve thought through every instruction you’ve given the system. You had better make sure the outcomes are exactly what you want before the automation starts running. Because automating a problem doesn’t fix it. It magnifies it and speeds it up.

Terence Tao, Fields Medal winner and widely regarded as the greatest living mathematician, put the problem in terms that should be required reading for every executive in technology. As highlighted by Dustin (@r0ck3t23) on X: AI has driven the cost of idea generation to essentially zero. A model can produce a thousand candidate theories for a scientific problem in a single afternoon. Not garbage. Structured, plausible, publishable-grade hypotheses. A thousand of them. Before dinner.

But Tao went further: verification, validation, and assessing what ideas actually move the subject forward is “not something we know how to do at scale.” The entire apparatus of human knowledge, peer review, journal boards, replication, debate, was built for a world where producing an idea took months. That infrastructure is now absorbing machine-speed volume. And it’s cracking.

This isn’t an abstract research problem. This is your Tuesday morning. Your team used Claude to draft a financial model. It looks right. The formulas work. The assumptions are plausible. But is it true? Does it reflect reality, or does it reflect the training data’s best guess at what a financial model should look like? If nobody on your team can build that model from scratch, nobody on your team can verify it. You’ve automated generation. You haven’t automated truth.
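Here is roughly what "verify it" can look like for one number in that financial model. This is a minimal sketch, not a prescribed method: the cash flows, the discount rate, the one-cent tolerance, and the two "reported" figures are all hypothetical, and the function names `npv` and `matches` are ours. The point is that the check is rebuilt from the definition, independently of whatever the model produced.

```python
from typing import List


def npv(rate: float, cash_flows: List[float]) -> float:
    """Net present value, with cash_flows[0] occurring today (t = 0)."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def matches(reported: float, recomputed: float, abs_tol: float = 0.01) -> bool:
    """True if the reported figure agrees with ours to within abs_tol."""
    return abs(reported - recomputed) <= abs_tol


# Hypothetical inputs: pay 1,000 today, receive 500 a year for three years.
cash_flows = [-1000.0, 500.0, 500.0, 500.0]
rate = 0.10

recomputed = npv(rate, cash_flows)

# Hypothetical figures an AI-drafted model might report for the same inputs.
for reported in (243.43, 268.00):
    status = "agrees" if matches(reported, recomputed) else "DOES NOT agree"
    print(f"reported {reported:.2f} {status} with recomputed {recomputed:.2f}")
```

If nobody on the team could write the `npv` function without help, that is the signal that the output cannot really be verified in-house.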

We write this newsletter every day using AI at multiple stages: research, synthesis, drafting. And we stop the process to write. Stop it to check copy. Stop it to verify claims. Stop it to look at the artwork. There’s a human in the loop, and there should be one for anything where a human is putting their name on it, for anything that requires insight and not just the prediction of the next token.

The bottleneck of the last five hundred years was producing the answer. The bottleneck of the next fifty is knowing whether the answer is real. And right now, according to the greatest mathematician alive, nobody knows how to do that at the speed the machines demand.

The tell: The race everyone sees is who builds the best model. The race that matters is who builds the system that tells you which answers are real. That second race has barely started, and it’s the only one that determines whether AI makes us smarter or just makes us faster at being wrong.

Tracking

What CEOs Should Be Watching:

- Iran conflict threatens AI supply chain — The Deep View — AWS Bahrain took a hit. Qatar’s helium plant was struck. Submarine cables in the Red Sea are closed. The semiconductor industry is sitting on a two-week raw materials clock. If you think AI infrastructure is a software problem, this is the week the atoms reminded you they still matter.

- Elon Musk announces Terafab, $25B chip facility in Austin — One terawatt capacity target. AI5 chip slated for 2027. The ambition is staggering. The problem: TSMC has 50,000 process engineers built over four decades. Musk has a press conference. Battery Day taught us what Musk timelines actually mean. Watch the hiring numbers, not the renders.

- YC W26 Demo Day: “Strongest batch in YC history” — 196 companies. One already at $27M ARR. 35% score in the top 20% of all YC companies ever. The tilt is unmistakable: robotics, energy, aerospace. Atoms over bits. The smartest early-stage investors on the planet just told you where the next decade of value creation lives, and it’s not in another SaaS wrapper.

- Jensen Huang on Lex Fridman: “We’ve achieved AGI” — Also projected $1 trillion in Blackwell/Rubin demand through 2027. The CEO of the picks-and-shovels company just declared the gold rush won while continuing to sell shovels. TSMC is bottlenecked through 2027. SK Hynix just committed $8B to ASML for memory capacity. Conviction at the top of the stack has never been higher. Conviction in the middle, where enterprises are still running pilots, has never been more uncertain.

What This Means For You

The most trusted company in AI just gave a model your mouse. A Wharton study found most people follow AI advice even when they know it is wrong. And the greatest living mathematician said nobody knows how to verify knowledge at the speed machines produce it. The race everyone’s watching is model capability. The race that matters is verification.

Stop automating workflows you can’t manually verify. If nobody on your team can build the output from scratch, nobody can catch when the model gets it wrong. That’s not efficiency. That’s liability with a nice interface.

Evaluate AI vendors on trust, not features. Cisco’s security leaderboard is the procurement conversation that matters more than any demo. Start there.

Build verification checkpoints into every AI-assisted process. The human in the loop isn’t a bottleneck. It’s the only thing standing between your company and an output that looks right, feels right, and isn’t.
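A checkpoint can be as simple as a gate the output must clear before anything ships. The sketch below is one possible shape, under stated assumptions: the `Checkpoint` and `ReviewResult` classes, the `run_checkpoints` function, and the two example checks (a cited source and a recorded human sign-off) are hypothetical illustrations, not part of any vendor's tooling. Real checks would be whatever your team can verify by hand.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Checkpoint:
    """One verification step the output must pass before release."""
    name: str
    check: Callable[[str], bool]  # returns True if the output passes


@dataclass
class ReviewResult:
    approved: bool
    failed: List[str] = field(default_factory=list)


def run_checkpoints(output: str, checkpoints: List[Checkpoint]) -> ReviewResult:
    """Approve the output only if every checkpoint passes."""
    failed = [cp.name for cp in checkpoints if not cp.check(output)]
    return ReviewResult(approved=not failed, failed=failed)


# Hypothetical checks for an AI-drafted memo.
checkpoints = [
    Checkpoint("cites a source", lambda text: "Source:" in text),
    Checkpoint("human sign-off recorded", lambda text: "Reviewed-by:" in text),
]

draft = "Q3 revenue grew 12%. Source: finance ledger."
result = run_checkpoints(draft, checkpoints)
print("approved:", result.approved, "| failed:", result.failed)

signed = draft + " Reviewed-by: A. Analyst"
print("approved after sign-off:", run_checkpoints(signed, checkpoints).approved)
```

The design choice that matters is the default: the draft is blocked until a named person signs off, rather than shipped unless someone objects.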

The machines got fast. The question is whether we stayed smart.

Three Questions We Think You Should Be Asking Yourself

If Anthropic is the Mac, what does that make your current AI vendor? The identity split is real and it’s accelerating. Enterprise buyers are choosing platforms the way consumers chose phones in 2010: on trust, brand, and whether the thing will embarrass them in front of their board. If your vendor is shipping unstable releases and bragging about hacking airplanes, that tells you something about who they’re building for. And it isn’t you.

Can anyone on your team actually verify what the AI produces? Not “review” it. Not “glance at it.” Verify it. Build it from scratch. Catch the error that looks right but isn’t. The Wharton study says 80% of people follow AI advice they know is wrong. If your team is using Claude to draft financial models, legal memos, or strategic plans, and nobody can independently reconstruct the work, you don’t have an AI-augmented team. You have a team that’s outsourced its judgment to a prediction engine.

What happens when the verification gap becomes your liability? Terence Tao says we can generate a thousand plausible hypotheses before dinner but can’t tell which ones are true. That’s not a research problem. That’s your Tuesday morning. The first company to face a material loss because “the model said it was right” will set the precedent for every company after. Ask your general counsel whether “Claude told me to” is a defense. Then ask yourself whether your processes would survive that question.

“We automated creation. We did not automate truth.”

— Terence Tao, via Dustin (@r0ck3t23)

— Harry and Anthony

Sources

- Anthropic Claude Computer Use — Official Launch

- Cisco LLM Security Leaderboard

- Manu (@ManuAF6) — OpenClaw vs Claude Analysis

- Inflight Entertainment Hack — @shadowk9752

- Wharton Cognitive Surrender Study — Tri-System Theory

- Dustin (@r0ck3t23) — Terence Tao on Verification

- Benedict Evans Newsletter #635

- Ramp Corporate Spend Data — AI Vendor Trends

- Accenture AI Consulting Revenue — $2.2B

- Bernie Sanders Interviews Claude — 4.4M Views

- Shelly Palmer — Every AI Company Racing to Ship Computer Control

- The Deep View — Iran Conflict and AI Supply Chain

- YC W26 Demo Day Results

- Jensen Huang on Lex Fridman — $1T Demand, “We’ve Achieved AGI”

- MyClaw Newsletter — OpenClaw 3.22/3.23 Stability Issues

- Anthropic Sycophancy Research
