Today's Briefing for Tuesday, March 24, 2026

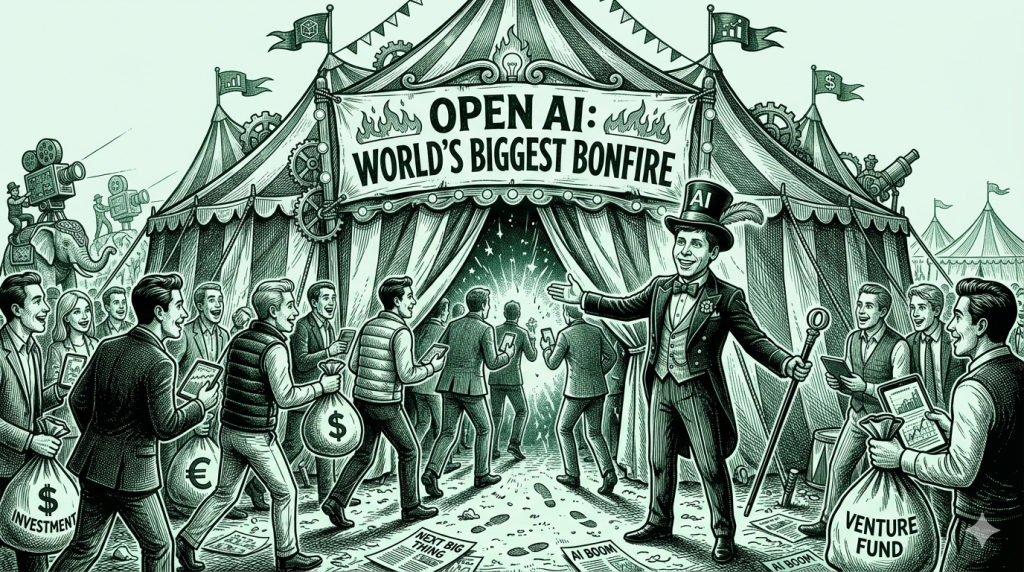

OpenAI Guarantees PE Firms 17.5%. The Bonfire Gets a Bigger Tent

THE NUMBER: 17.5%, the guaranteed minimum return OpenAI is offering private equity firms to raise $4 billion in new capital. For context, the S&P 500 has averaged 10.5% annually over the last decade. When a pre-IPO company expected to go public at over $1.5 trillion has to promise returns roughly two-thirds higher than the market’s (7 percentage points above it) just to get investors in the door, the incentive structure is telling you something the press release isn’t.
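For anyone who wants to check the comparison, here is a quick sketch of the arithmetic. The two rates are the figures quoted above; the variable names are ours and purely illustrative.

```python
# Figures quoted in the briefing; variable names are illustrative.
guaranteed_return = 0.175   # reported guaranteed minimum return
sp500_average = 0.105       # S&P 500 average annual return cited above

# Absolute gap in percentage points, and relative premium over the index.
gap_points = (guaranteed_return - sp500_average) * 100
relative_premium = guaranteed_return / sp500_average - 1

print(f"Gap: {gap_points:.1f} percentage points")
print(f"Relative premium: {relative_premium:.0%}")
```

The gap is 7 percentage points in absolute terms, which works out to a relative premium of about two-thirds over the index average.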

The Opening

Two stories landed today that look separate but aren’t. OpenAI is offering PE firms a guaranteed 17.5% return with downside protection to raise $4 billion. And OpenAI is rolling out ads across ChatGPT to 900 million weekly active users at $60 CPM with a $200,000 minimum buy. Early partners include Shopify (NYSE: SHOP), Target (NYSE: TGT), and Adobe (NASDAQ: ADBE).

💲 Follow the money and you see the full picture. OpenAI burned through roughly $14 billion last year on approximately $10 billion in revenue. After a $110 billion raise in February that pushed the valuation to $840 billion, the cash still isn’t enough. So now they’re guaranteeing PE firms returns that would make a hedge fund blush, and bolting an ad engine onto the product that was supposed to be the gateway to artificial general intelligence.

Charlie Munger said it best: show me the incentives and I’ll show you the behavior. The incentive here isn’t to build AGI. It’s to keep the tent standing.

Meanwhile, on the other side of the industry, the agent era is arriving without any of the infrastructure it needs. 🦞 Harrison Chase (LangChain CEO) tweeted today about a fundamental distinction most companies haven’t even considered: there are two completely different types of agent authorization, “Claws” (fixed credentials, the agent acts as itself) and “Assistants” (user credentials, the agent acts on your behalf). Most companies deploying agents don’t understand this yet. One of us tried to buy a pair of slides from a Shopify store using an agent yesterday. Got all the way to checkout. The agent didn’t have a wallet. There was no agentic path into the store at all. The agent did exactly what a human would have done, click by click, at exactly the same speed.

The biggest AI company in the world is scrambling for cash while selling ads. The agent revolution has no plumbing. These are connected. The money is flowing to valuations while the infrastructure remains unbuilt.

💲 The $840 Billion Carnival: OpenAI’s PE Play Is a Distribution Deal in Disguise

Sam Altman’s OpenAI isn’t raising $4 billion because it needs more capital. It raised $110 billion six weeks ago. It’s raising $4 billion because of who’s writing the checks.

Private equity firms don’t just invest. They own companies. Hundreds of them. When Thoma Bravo or Vista or Silver Lake puts money into OpenAI with a guaranteed 17.5% floor, they’re not betting on an IPO pop. They’re buying the right to standardize OpenAI across every portfolio company they control. You give me money, I give you a guaranteed return and early model access, and your companies stop evaluating Anthropic and Google. It’s a channel play dressed as a fundraise.

This is a normal move in the deal business. If you’ve ever seen Sequoia go to a large LP and say “I’ll cut my fees and give you co-invest rights if you lock up a bigger allocation,” you know the playbook. The difference is Sequoia is in the deal business. OpenAI was supposed to be in the AGI business.

Except now they’re in the ad business too. ChatGPT is rolling out ads to free and Go-tier users. $60 CPM. $200K minimum buy. The manifesto we quoted just last week applies directly here: “If your revenue comes from advertising, your incentive is to keep people scrolling.” When the model starts recommending products, how will you know which answer is for you and which is for Shopify?
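To put the ad terms in perspective, CPM means cost per thousand impressions, so the minimum buy translates directly into an impression count. A rough sketch using only the numbers quoted in this briefing; the function name is ours, and the comparison to weekly users is a back-of-envelope ratio, not a claim about how the ads are actually served.

```python
def impressions_for_budget(budget_usd: float, cpm_usd: float) -> int:
    """Impressions a budget buys at a given CPM (cost per 1,000 impressions)."""
    return int(budget_usd / cpm_usd * 1000)


# $200,000 minimum buy at a $60 CPM, as reported above.
min_buy_impressions = impressions_for_budget(200_000, 60)

# Back-of-envelope ratio against the 900 million weekly active users cited above.
weekly_users = 900_000_000
share_of_users = min_buy_impressions / weekly_users

print(f"{min_buy_impressions:,} impressions")
print(f"{share_of_users:.2%} of weekly users, at one impression each")
```

In other words, the entry ticket buys a few million impressions, a small fraction of one week of ChatGPT's reported audience.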

Here’s the part nobody’s connecting. The model layer is commoditizing underneath all of this. Chinese open-source went from 1.2% to 30% of global usage in a single year. Cursor just got caught running Kimi K2.5 (a Chinese model at one-eighth the cost of Claude) inside Composer 2. That’s their second concealment in four months. Cursor didn’t hide Kimi for national security reasons. They hid it because token cost is their only cost, and Kimi is cheaper. If the model layer is becoming a commodity, what exactly is an $840 billion valuation pricing in?

Sam Altman has changed the story more fluidly than the currents in San Francisco Bay. Nonprofit to capped-profit to for-profit. Safety-first to “move fast.” Research lab to ad platform. If you had to cast him, you wouldn’t reach for a lab coat. You’d reach for a carnival barker’s uniform. PT Barnum expanding the tent, promising a seat for every ass, changing the act whenever the crowd gets restless.

The signal for builders: The 17.5% guarantee tells you OpenAI’s organic growth story isn’t closing investors on its own. The ads tell you the subscription model isn’t covering costs. If you’re building on OpenAI’s APIs, understand that your platform partner’s incentives just shifted from “make the best model” to “keep the tent standing.” That’s a vendor risk conversation your team should be having this week. Anthropic raised straight equity, no guarantees, no downside protection. That’s not just confidence. It’s alignment.

🦞 The Agent Revolution Has No Plumbing

Yesterday, one of us tried to buy a pair of slides from Namu Recovery using an AI agent. The agent navigated the site, found the product, selected the size, and got all the way to checkout. Then it stopped. No wallet. No payment credentials. No agentic API to call. The agent had done exactly what a human would do, clicking through Chrome, reading the page, filling in forms, at exactly the same speed. That’s not automation. That’s cosplay.

And it gets worse. When we asked Claude how to improve the experience, the answer was honest: there is no agentic path into that Shopify store. The only option is the browser extension, manually navigating a UI designed for human eyes and human clicks.

This is the state of agent infrastructure in March 2026. Jensen Huang told every company at GTC they need an OpenClaw strategy. OpenClaw has 250,000 GitHub stars. But Harrison Chase (LangChain CEO) tweeted today about what’s actually missing: agent authorization doesn’t have standards. There are two fundamentally different models, “Claws” (the agent acts with fixed credentials, as itself) and “Assistants” (the agent acts on behalf of a user, with delegated credentials). Most companies deploying agents haven’t even thought about the distinction, let alone built for it.
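One way to picture the distinction in code. This is a minimal sketch under our own assumptions: every name here (`AuthMode`, `Credential`, `resolve_credential`) is hypothetical, and none of it is LangChain's API or anything Chase published. The point is only that the two models answer the question "whose identity does the downstream service see?" differently.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class AuthMode(Enum):
    CLAW = "claw"            # agent acts as itself, with fixed credentials
    ASSISTANT = "assistant"  # agent acts on behalf of a user


@dataclass(frozen=True)
class Credential:
    principal: str  # the identity the downstream service sees
    token: str      # placeholder secret for illustration only


def resolve_credential(mode: AuthMode,
                       agent_cred: Credential,
                       user_creds: Dict[str, Credential],
                       user_id: Optional[str] = None) -> Credential:
    """Pick the credential an agent should present for a single action."""
    if mode is AuthMode.CLAW:
        # Fixed credentials: the same identity no matter who asked.
        return agent_cred
    # Delegated credentials: refuse to act if the user never granted any.
    if user_id is None or user_id not in user_creds:
        raise PermissionError("assistant mode needs a delegated user credential")
    return user_creds[user_id]


agent = Credential("support-bot", "agent-token")
users = {"alice": Credential("alice", "alice-delegated-token")}

print(resolve_credential(AuthMode.CLAW, agent, users).principal)
print(resolve_credential(AuthMode.ASSISTANT, agent, users, "alice").principal)
```

In "Claw" mode the service always sees the agent's own identity. In "Assistant" mode it sees the user's, and the sketch fails closed when no delegation exists, which is roughly where our checkout attempt ended up.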

Think of it this way. You can walk to town in your sneakers along the sidewalk, or you can drive. The rules for getting there and back are largely the same, but one is orders of magnitude faster. Now imagine you send your daughter to the store to fetch lunch. You never break stride and lunch appears like magic. But she needs your credit card (probably in your name) and your order. The delegation works because there are systems in place: a card issuer, a payment network, a receipt, trust.

Agents don’t have any of that yet. No wallets. No delegated payment credentials. No micropayment rails for the thousands of tiny transactions agents will make. No discovery layer (how does an agent find the right store?). No structured data feeds built for machine consumption instead of human browsing. No verification protocols. We need, essentially, a mirror image of the internet, built from an agent’s perspective.

Meanwhile, companies are charging ahead anyway. Mark Zuckerberg is reportedly training an AI agent to do his own CEO job at Meta (NASDAQ: META). Meta employees have already deployed their own agents that talk to each other autonomously, and one triggered a SEV1 security incident. Meta’s also tied AI tool adoption to performance reviews for 78,865 workers. The speed is there. The governance is not.

Why this matters: Having an “OpenClaw strategy” without understanding agent authorization is like having an “internet strategy” in 1998 without understanding HTTP. The opportunities will be enormous, but we’re in the earliest innings. If you’re an allocator, the infrastructure layer (auth, payments, discovery, data formatting) is where the next wave of value creation lives. If you’re an operator deploying agents, write your permissions policies now. Don’t wait for a SEV1 to force the conversation.
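What might "write your permissions policies now" look like in practice? A deliberately small sketch, assuming a policy is just an allowlist of actions, a per-action spend cap, and an audit trail. The `AgentPolicy` class and the action names are hypothetical illustrations, not a standard or any vendor's product.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class AgentPolicy:
    allowed_actions: Set[str]
    spend_limit_usd: float = 0.0  # cap applied to each individual action
    audit_log: List[Tuple[str, float, bool]] = field(default_factory=list)

    def authorize(self, action: str, cost_usd: float = 0.0) -> bool:
        """Allow an action only if it is allowlisted and within the spend cap."""
        allowed = (action in self.allowed_actions
                   and cost_usd <= self.spend_limit_usd)
        # Record every decision, including denials, so someone is accountable.
        self.audit_log.append((action, cost_usd, allowed))
        return allowed


policy = AgentPolicy({"browse", "checkout"}, spend_limit_usd=100.0)

print(policy.authorize("checkout", 59.0))    # allowlisted and under the cap
print(policy.authorize("checkout", 250.0))   # allowlisted but over the cap
print(policy.authorize("send_email"))        # not on the allowlist
print(len(policy.audit_log))
```

Even something this simple answers the two questions that matter after an incident: what was the agent allowed to do, and what did it actually try?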

What This Means For You

The two biggest stories in AI today are connected by the same thread: the money is flowing to the wrong places. $840 billion for a company that has to bribe investors and sell ads. 250,000 GitHub stars for an agent framework that has no plumbing underneath it. The hype is ahead of the infrastructure, and the infrastructure is where the real value will compound.

Stop treating model selection as your most important AI decision. The model layer is commoditizing. Chinese open-source went from 1.2% to 30% in a year. Your agent’s authorization model, payment rails, and data architecture matter more than which LLM you’re calling.

Treat the OpenAI PE deal as a vendor risk signal. When your platform partner’s incentives shift from model quality to revenue diversification, your dependency becomes their leverage. Review your API contracts and evaluate alternatives before you’re locked in through a PE firm’s portfolio mandate.

Build agent infrastructure before you scale agent deployment. The companies that win the agent era won’t be the ones that moved fastest. They’ll be the ones that built the trust layer: auth, wallets, permissions, audit trails. Speed without governance is how you get a SEV1 at 2 AM.

The model wars make headlines. The infrastructure wars make money. Position accordingly.

Three Questions We Think You Should Be Asking Yourself

If OpenAI has to guarantee PE firms 17.5% just to raise $4 billion, what does that tell you about the organic demand for their equity? Six weeks after a $110 billion round at $840 billion, they can’t raise $4 billion on the strength of the story alone. Either the smart money sees something the headline valuation doesn’t reflect, or the deal terms are buying distribution, not conviction. Either way, if you’re building on their platform, you should understand which one.

Does your company have an agent authorization policy, or are your employees making it up as they go? Meta tied AI adoption to performance reviews and got a SEV1 from autonomous agent-to-agent communication nobody planned for. The question isn’t whether your people are deploying agents. They are. The question is whether you know what permissions those agents have and who’s accountable when they break something.

What happens if AGI becomes electricity? That’s the question nobody in Silicon Valley wants to ask out loud. Utilities generate utility-like returns, and they get capped by politicians everywhere. Watch the signals: Anthropic turned down the Pentagon. Grok and OpenAI happily signed on the dotted line. And while that was playing out, Claude was the model Palantir (NYSE: PLTR) was using to help target strategic sites in Iran. At some point, AI becomes so vital for national security and economic dominance that the government steps in and does what it’s always done with essential infrastructure: nationalizes it, or (like defense contractors) allows it to flourish with an understanding. Railroads, telecom, defense. Every technology that becomes the backbone eventually gets a leash. If you’re investing at an $840 billion valuation, you should be modeling that scenario.

“Every crowd has a silver lining.”

— P.T. Barnum

— Harry and Anthony

Sources

- OpenAI 17.5% guaranteed PE return details

- High Yield Harry on OpenAI deal terms

- Compound248 on OpenAI financial analysis

- Harrison Chase on agent authorization: Claws vs Assistants

- ChatGPT ads rollout: Shopify, Target, Adobe as early partners

- Cursor concealing Kimi K2.5 in Composer 2

- Chinese open-source model growth data

- Meta agent deployment and SEV1 incident

- Mark Zuckerberg training AI CEO agent

- Jensen Huang GTC keynote: OpenClaw strategy mandate

- OpenAI $110B raise at $840B valuation, February 2026

