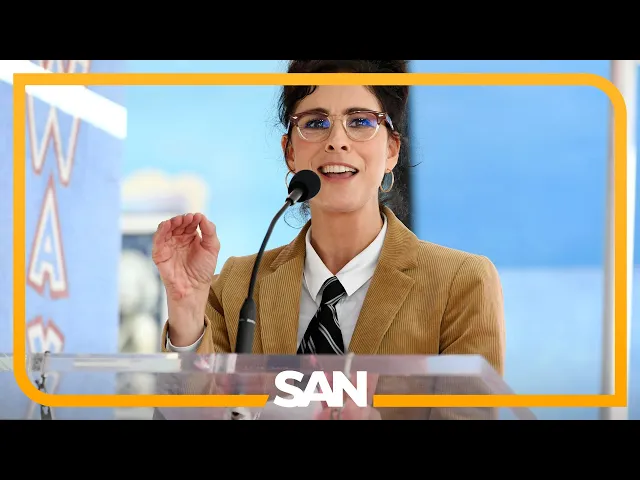

Sarah Silverman, other authors lose AI copyright case against Meta

AI copyright case against Meta stumbles

In a significant legal development that could shape the future of AI training on copyrighted works, comedian Sarah Silverman and several authors have faced a major setback in their lawsuit against Meta. The case centered on claims that Meta's large language models were trained on their copyrighted books without permission, but a federal judge has dismissed most of their claims, delivering a blow to creators concerned about AI's use of their intellectual property.

The ruling highlights the evolving intersection of copyright law and artificial intelligence, raising profound questions about what constitutes fair use in the digital age. As AI systems continue to ingest vast amounts of human-created content to improve their capabilities, this case represents just one battle in what promises to be a prolonged legal war over creative ownership in the AI era.

Key insights from the case

- Judge Vince Chhabria dismissed the authors' direct copyright infringement claims, ruling that they failed to demonstrate that Meta's AI models actually reproduced their specific works.

- The judge left intact a claim that the LLaMA training dataset contained the plaintiffs' books, but dismissed claims that the models themselves contain copyrighted material.

- The ruling distinguished between the data used to train AI and the output these models produce, a legal distinction that may influence future cases.

Why this matters more than you think

The most significant takeaway from this ruling is the emerging legal framework that distinguishes between training data and AI outputs. Judge Chhabria essentially created a bifurcated approach to AI copyright analysis: the training dataset might contain copyrighted material (still potentially actionable), but the resulting AI model itself doesn't necessarily "contain" those works in a way that constitutes copyright infringement.

This distinction carries enormous implications for the AI industry. By separating training inputs from model outputs, the court has potentially carved out significant protection for AI developers. Tech companies like Meta, Google, and OpenAI have built their models by ingesting massive amounts of text from the internet and published works. If this ruling stands and other courts follow it, it could significantly reduce their legal exposure. That would speed up AI development while potentially undermining creators' control over how their works are used.

What the ruling missed

The court's approach, while technically sound under current copyright frameworks, overlooks a
